Remote QA Engineer Jobs: Complete 2026 Career Guide

Everything you need to land a remote QA engineer job. Test automation, manual testing, SDET - salary data, interview questions, and companies hiring.

Updated January 20, 2026 • Verified current for 2026

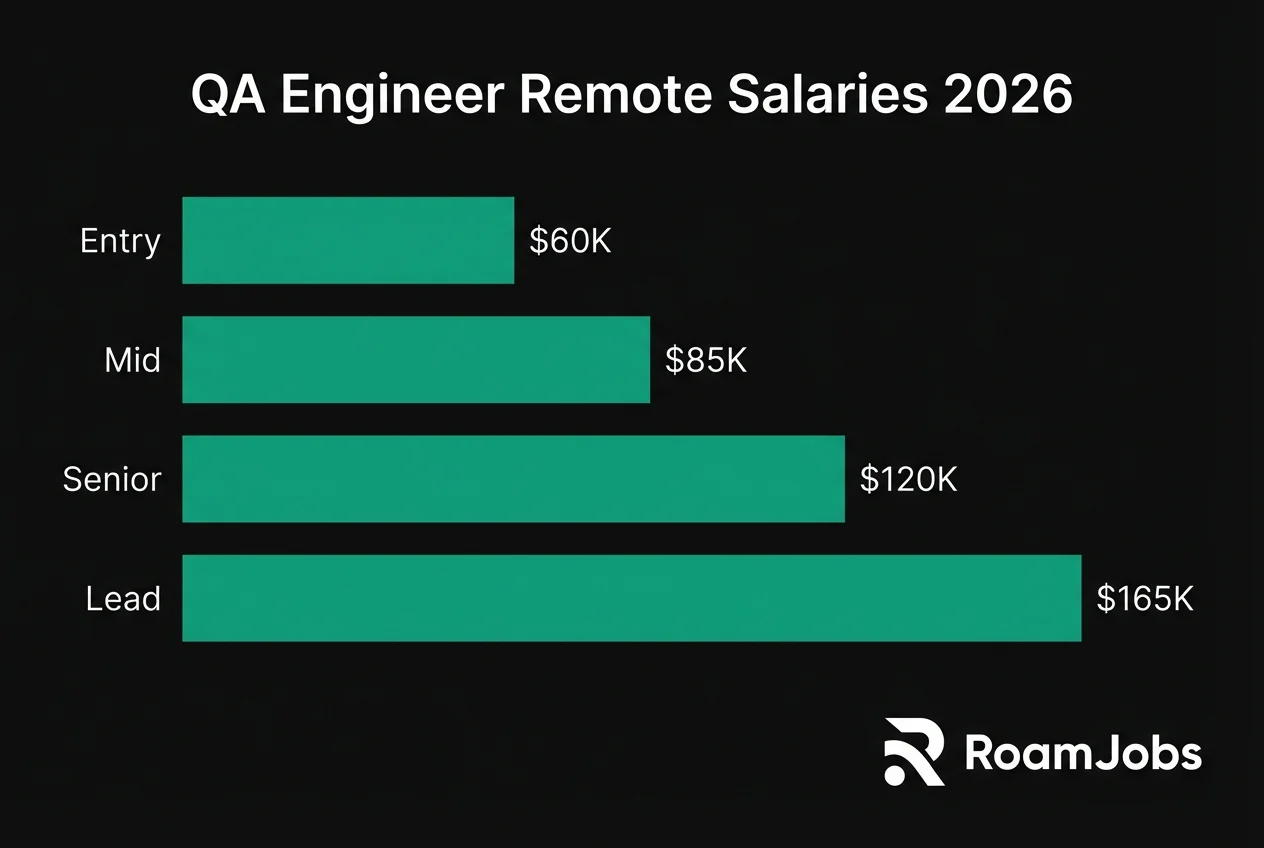

Remote QA Engineers ensure software quality through systematic testing, bug detection, and quality assurance processes—all from anywhere in the world. With salaries ranging from $60,000 to $165,000 for US-based remote positions (and up to $220K+ for QA Directors), this career offers excellent remote work opportunities due to its measurable outputs and asynchronous workflow compatibility. The role spans a spectrum from manual testing specialists to automation engineers and SDETs (Software Development Engineers in Test), with a clear career path from entry-level positions requiring no coding to senior automation architects designing test frameworks. In 2026, the most in-demand QA professionals combine strong testing fundamentals with automation skills in tools like Playwright, Cypress, and Selenium, plus experience with CI/CD integration and API testing.

What Does a Remote QA Engineer Do?

Quality Assurance Engineering is the discipline of ensuring software meets specified requirements and user expectations before it reaches production. Remote QA Engineers work within distributed teams to design test strategies, execute test cases, automate repetitive testing tasks, and collaborate with developers to resolve defects—all without ever stepping into an office.

Day-to-Day Responsibilities

The daily work of a remote QA Engineer varies significantly based on seniority and specialization, but typically includes:

Test Planning and Strategy

- Reviewing product requirements and user stories to identify testable acceptance criteria

- Creating comprehensive test plans that cover functional, integration, and regression scenarios

- Collaborating asynchronously with product managers and developers to clarify requirements

- Estimating testing effort for sprint planning and release cycles

Test Execution and Automation

- Writing and maintaining automated test scripts for web, mobile, and API testing

- Executing manual exploratory testing sessions to find edge cases automation might miss

- Running regression test suites before releases and validating bug fixes

- Maintaining and improving test frameworks and infrastructure

Defect Management and Communication

- Documenting bugs with detailed reproduction steps, logs, and screenshots

- Triaging defects based on severity and business impact

- Collaborating with developers through code review comments and async communication

- Providing quality metrics and test coverage reports to stakeholders
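The triage step above can be sketched as a small data structure plus a sort. This is only an illustration: the `BugReport` fields, the four severity levels, and the `affectedUsersPercent` estimate are assumptions made for the example, not the schema of Jira or any other tracker.

```typescript
// Hypothetical shapes for illustration; real trackers define their own fields.
type Severity = "critical" | "major" | "minor" | "trivial";

interface BugReport {
  id: string;
  title: string;
  severity: Severity;
  affectedUsersPercent: number; // rough estimate of business impact, 0-100
  stepsToReproduce: string[];
  attachments: string[]; // paths or URLs to logs and screenshots
}

const severityRank: Record<Severity, number> = {
  critical: 0,
  major: 1,
  minor: 2,
  trivial: 3,
};

// Order by severity first, then by estimated user impact (highest first).
function triage(bugs: BugReport[]): BugReport[] {
  return [...bugs].sort(
    (a, b) =>
      severityRank[a.severity] - severityRank[b.severity] ||
      b.affectedUsersPercent - a.affectedUsersPercent
  );
}

// A report without a title or reproduction steps is hard to act on asynchronously.
function isActionable(bug: BugReport): boolean {
  return bug.title.trim().length > 0 && bug.stepsToReproduce.length > 0;
}
```

In a remote team, a check like `isActionable` could run before a ticket is assigned, so developers in other time zones are not blocked waiting for missing reproduction steps.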

Continuous Improvement

- Identifying opportunities to automate repetitive manual tests

- Researching new testing tools and methodologies

- Contributing to testing best practices documentation

- Mentoring junior QA team members on testing strategies

QA Engineer vs SDET vs QA Analyst: Understanding the Differences

The quality assurance field encompasses several distinct roles, and understanding their differences helps you target the right opportunities.

QA Role Comparison

Source: RoamJobs 2026 QA Salary Report

| Role | Coding Requirement | Primary Focus | Typical Salary (US Senior) | Remote Availability |
|---|---|---|---|---|
| QA Analyst | Minimal/None | Test documentation, manual testing, process | $65K-$95K | High |
| Manual QA Engineer | Basic scripting | Exploratory testing, test case design | $70K-$100K | High |
| QA Automation Engineer | Strong coding | Building and maintaining test automation | $115K-$165K | Very High |
| SDET | Expert-level coding | Test infrastructure, framework development | $130K-$180K | Very High |
| QA Lead/Manager | Moderate | Team leadership, strategy, process | $140K-$200K | High |

Data compiled from RoamJobs 2026 QA Salary Report. Last verified January 2026.

QA Analyst focuses primarily on test documentation, process improvement, and coordinating testing activities. This role emphasizes communication and organizational skills over technical implementation, making it an excellent entry point for those without programming backgrounds.

Manual QA Engineer specializes in exploratory testing and manual test execution. While automation is increasingly expected, many companies still need skilled manual testers who can find bugs that automated tests miss through creative, human-driven testing approaches.

QA Automation Engineer writes code to automate test execution, typically focusing on UI testing, API testing, and integration testing. This role requires solid programming skills and knowledge of test frameworks like Selenium, Cypress, or Playwright.

SDET (Software Development Engineer in Test) combines strong software development skills with testing expertise. SDETs build test infrastructure, create custom testing tools, and often contribute to the main codebase. They’re essentially developers who specialize in quality.

QA Lead/Manager oversees QA teams, defines testing strategies, and ensures quality processes align with business goals. This role requires a blend of technical knowledge and leadership skills.

Why QA Is Well-Suited for Remote Work

Quality assurance ranks among the most remote-friendly engineering disciplines for several compelling reasons:

Measurable, Asynchronous Output QA work produces tangible, measurable artifacts: test cases written, bugs found, automation coverage percentages, and quality metrics. This output-based nature makes it easy for remote teams to evaluate performance without requiring constant real-time presence.

Documentation-Centric Workflow QA inherently involves extensive documentation—test plans, bug reports, test case specifications, and quality reports. This documentation-heavy workflow translates naturally to asynchronous remote collaboration, where written communication is the norm.

Independent Execution Much of QA work can be executed independently once requirements are understood. A QA engineer can run test suites, investigate bugs, and write automation code without needing continuous synchronous interaction with teammates.

Clear Handoff Points The QA workflow has well-defined handoff points: developers complete features, QA tests them, bugs are filed, fixes are verified. These discrete phases work well in distributed teams with timezone differences.

Tool-Based Collaboration Modern QA workflows rely heavily on tools like Jira, TestRail, GitHub, and CI/CD platforms that enable asynchronous collaboration. Test results, bug reports, and test coverage metrics are accessible to anyone, anywhere.

Salary Breakdown by Seniority Level

Remote QA Engineering salaries vary significantly based on experience, specialization, and geographic location. Understanding these ranges helps you negotiate effectively and plan your career progression.

QA Engineer Salary by Experience & Location

| Level | 🇺🇸 US Remote | 🇪🇺 EU Remote | 🌎 LATAM | 🌏 Asia |
|---|---|---|---|---|
| Entry Level (0-2 yrs) | $60,000 - $82,000 | $38,000 - $55,000 | $22,000 - $40,000 | $18,000 - $35,000 |
| Mid-Level (2-5 yrs) | $85,000 - $120,000 | $55,000 - $82,000 | $38,000 - $62,000 | $32,000 - $55,000 |
| Senior (5-8 yrs) | $115,000 - $165,000 | $78,000 - $115,000 | $55,000 - $90,000 | $48,000 - $78,000 |
| Lead/Director (8+ yrs) | $150,000 - $220,000 | $105,000 - $155,000 | $75,000 - $120,000 | $65,000 - $105,000 |

* Salaries represent base compensation for remote positions. Actual compensation may vary based on company, experience, and specific location within region.

Career Levels: From Entry to Director

Entry Level / Junior QA Engineer

0-2 years experience

Breaking Into Remote QA

Entry-level QA positions are among the most accessible paths into the tech industry, often requiring no formal computer science degree. However, competition for remote junior roles is fierce, so standing out requires intentional preparation.

Skills to Develop:

- Test case design and documentation using industry-standard formats

- Bug reporting with clear reproduction steps and severity classification

- Basic SQL queries for database validation

- Understanding of software development lifecycle (SDLC) and Agile methodologies

- Familiarity with test management tools like TestRail, Zephyr, or qTest

- Basic API testing concepts using tools like Postman

- Introduction to at least one programming language (Python or JavaScript recommended)

How to Break In Without Experience:

- Complete an ISTQB Foundation certification - This internationally recognized credential validates your testing fundamentals and helps bypass resume filters

- Build a testing portfolio - Find open-source projects and contribute documented bug reports or test cases

- Practice on real applications - Use bug bounty platforms or test public beta applications to build practical experience

- Learn basic automation - Even entry-level roles increasingly expect familiarity with automation concepts

- Network in QA communities - Join Ministry of Testing, QA-focused Slack groups, and LinkedIn communities

Entry-Level Job Search Strategy: Focus on companies with established QA training programs, startups building their first QA teams, and agencies that offer exposure to multiple projects. Titles to search for include: Junior QA Engineer, QA Analyst, Associate QA Engineer, and Test Engineer I.

Remote-Specific Considerations: Entry-level remote positions are more competitive than on-site roles because companies receive applications globally. Differentiate yourself by demonstrating strong written communication skills, self-motivation, and familiarity with remote collaboration tools.

Mid-Level QA Engineer

2-5 years experience

The Automation Transition Point

Mid-level QA positions mark the critical transition from manual testing to automation. This is where the career path diverges: those who develop strong automation skills see significant salary increases, while those remaining purely manual face a more limited market.

Core Competencies Expected:

- Proficient in at least one automation framework (Selenium, Cypress, Playwright, or Appium)

- Strong programming skills in Python, JavaScript, or Java for test development

- API testing automation using REST Assured, Postman/Newman, or similar tools

- CI/CD integration: running tests in Jenkins, GitHub Actions, or GitLab CI

- Performance testing basics with tools like JMeter, k6, or Gatling

- Database testing with complex SQL queries and data validation

- Version control proficiency with Git workflows

Automation Skills to Prioritize:

- Page Object Model (POM) and other design patterns for maintainable test code

- Data-driven testing approaches for scaling test coverage

- Parallel test execution for faster feedback cycles

- Test reporting with tools like Allure, ExtentReports, or custom dashboards

- Docker basics for consistent test environments
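Data-driven testing, one of the skills listed above, separates the test logic from a table of inputs and expected results, so adding coverage means adding a row. A minimal sketch follows; `isValidPassword` and its rules are a hypothetical function under test invented for this example.

```typescript
// Hypothetical function under test: at least 8 characters, one letter, one digit.
function isValidPassword(password: string): boolean {
  return password.length >= 8 && /\d/.test(password) && /[A-Za-z]/.test(password);
}

interface PasswordCase {
  name: string;
  input: string;
  expected: boolean;
}

// The data table: each row is one test case.
const passwordCases: PasswordCase[] = [
  { name: "letters and digits, long enough", input: "abc12345", expected: true },
  { name: "too short", input: "abc123", expected: false },
  { name: "no digits", input: "abcdefgh", expected: false },
  { name: "no letters", input: "12345678", expected: false },
  { name: "empty string", input: "", expected: false },
];

// One loop runs every row and collects readable failure messages.
function runPasswordCases(table: PasswordCase[]): string[] {
  const failures: string[] = [];
  for (const c of table) {
    const actual = isValidPassword(c.input);
    if (actual !== c.expected) {
      failures.push(`${c.name}: expected ${c.expected}, got ${actual}`);
    }
  }
  return failures;
}
```

Most test runners offer their own way to express this pattern (parameterized tests or a loop that generates test cases), so in practice the table would usually feed the runner rather than a hand-written loop.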

Career Growth Tactics:

- Lead automation initiatives - Volunteer to automate a manual test suite and document the ROI

- Specialize strategically - Become the go-to person for mobile testing, API testing, or performance testing

- Contribute to test architecture - Help design frameworks, not just write tests

- Mentor junior testers - Leadership experience accelerates promotion to senior roles

- Present your work - Share testing strategies in team meetings, write internal documentation

Salary Negotiation at Mid-Level: At this stage, your automation skills directly impact compensation. Quantify your impact: “I automated 500 regression tests, reducing manual testing time by 20 hours per sprint.” Companies pay premium rates for engineers who can demonstrate both testing expertise and coding ability.

Senior QA Engineer

5-8 years experience

Technical Leadership and Architecture

Senior QA Engineers are technical leaders who shape testing strategy, mentor team members, and make architectural decisions about test infrastructure. At this level, you’re expected to drive quality initiatives independently and influence engineering culture.

Technical Expertise Required:

- Designing and implementing test automation frameworks from scratch

- Test architecture decisions: when to use unit, integration, E2E, and contract testing

- Advanced CI/CD pipeline optimization for testing workflows

- Performance and load testing strategy for distributed systems

- Security testing fundamentals and integration into QA processes

- Mobile testing expertise across iOS and Android platforms

- Cross-browser and cross-device testing strategies

Leadership Responsibilities:

- Defining testing standards and best practices for engineering teams

- Code reviewing test automation from junior and mid-level engineers

- Collaborating with developers on testability improvements

- Estimating QA effort for large projects and communicating risks

- Interviewing and evaluating QA candidates

- Driving quality metrics and reporting to stakeholders

Advanced Skills for Senior Roles:

- Contract testing with Pact or similar tools for microservices

- Visual regression testing with Percy, Applitools, or BackstopJS

- Accessibility testing automation and WCAG compliance

- Test environment management including infrastructure as code

- Shift-left testing practices and developer enablement

Path to Staff/Principal: The trajectory from Senior to Staff/Principal QA Engineer requires demonstrating impact beyond your immediate team. This includes:

- Leading cross-team quality initiatives

- Establishing company-wide testing standards

- Contributing to open-source testing tools

- Speaking at conferences or publishing technical content

- Influencing product and engineering decisions through quality advocacy

Lead / Director QA

8+ years experience

QA Leadership and Management

QA Directors and Managers combine deep technical expertise with people leadership, setting quality strategy for entire organizations. These roles are increasingly available remotely as companies embrace distributed leadership.

Strategic Responsibilities:

- Defining quality strategy aligned with business objectives

- Building and scaling QA teams across time zones

- Budget management for tools, infrastructure, and team growth

- Establishing quality metrics and KPIs for executive reporting

- Vendor evaluation and management for testing tools and services

- Risk assessment and quality governance for major releases

People Management Skills:

- Hiring, onboarding, and retaining remote QA talent

- Performance management and career development for team members

- Building inclusive team culture across distributed teams

- Managing stakeholder relationships with product, engineering, and executives

- Handling difficult conversations about quality tradeoffs

Technical Leadership at Scale:

- Evaluating and implementing testing tools across the organization

- Standardizing QA processes while allowing team autonomy

- Driving automation strategy and measuring ROI

- Ensuring security and compliance testing requirements are met

- Championing quality in architectural discussions

Remote Leadership Challenges: Managing QA teams remotely requires exceptional communication skills:

- Over-communicate context and decisions through documentation

- Create multiple touchpoints: 1:1s, team meetings, async updates

- Build trust through consistent availability and follow-through

- Foster team cohesion despite physical distance

- Balance synchronous meetings across time zones

Common Titles at This Level:

- QA Manager / QA Director

- Director of Quality Engineering

- Head of QA

- VP of Quality

- Principal QA Engineer (IC track)

Essential Skills and Tools

Success as a remote QA Engineer requires mastering both technical tools and soft skills that enable effective distributed collaboration.

Test Automation Frameworks

The test automation landscape has evolved significantly, with modern frameworks offering better developer experience and reliability than earlier tools. Here’s how the leading options compare:

Test Automation Framework Comparison

Source: RoamJobs 2026 Testing Tools Survey

| Framework | Best For | Learning Curve | Language Support | Browser Support | Key Advantage |
|---|---|---|---|---|---|
| Playwright | Modern web apps | Medium | JS/TS, Python, Java, C# | All major + WebKit | Auto-waiting, trace viewer |
| Cypress | Frontend testing | Easy | JavaScript/TypeScript | Chrome, Firefox, Edge | Real-time reloading, debugging |
| Selenium | Enterprise, legacy | High | All major languages | All browsers | Industry standard, huge ecosystem |
| WebDriverIO | Flexible needs | Medium | JavaScript/TypeScript | All browsers + mobile | Extensibility, mobile support |
| Puppeteer | Chrome automation | Medium | JavaScript/TypeScript | Chrome, Firefox | Google-backed, fast execution |

Data compiled from RoamJobs 2026 Testing Tools Survey. Last verified January 2026.

Playwright has emerged as the leading modern framework in 2026, offering exceptional cross-browser support, built-in auto-waiting that removes a common source of flaky tests, and powerful debugging tools like the trace viewer. If you’re learning test automation today, Playwright is the recommended starting point.

Cypress remains popular for frontend-heavy applications, especially in React and Vue ecosystems. Its real-time reloading and time-travel debugging provide an excellent developer experience, though its architecture limits some advanced testing scenarios.

Selenium continues to dominate enterprise environments and remains essential knowledge for any QA professional. While newer frameworks offer better developer experience, Selenium’s massive ecosystem and language support make it irreplaceable for many organizations.

API Testing Tools

API testing is increasingly critical as modern applications rely on microservices and third-party integrations. Every QA engineer should be proficient in at least one API testing approach.

Postman remains the industry standard for manual API exploration and testing. Its collection runner, environment management, and Newman CLI integration make it valuable for both manual and automated workflows.

REST Assured (Java) provides a powerful domain-specific language for API test automation, integrating seamlessly with existing Java test frameworks and CI/CD pipelines.

Supertest (JavaScript) offers lightweight API testing for Node.js applications, often combined with Jest or Mocha for comprehensive test suites.

Playwright API Testing allows you to use the same framework for both UI and API tests, simplifying your testing stack and test maintenance.
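Whichever tool you choose, most API tests reduce to the same checks: status code, headers, and the shape of the response body. The sketch below shows those checks as a plain function. The `ApiResponse` shape and the user fields (`id`, `email`) are assumptions for illustration, not the contract of any real service.

```typescript
// Hypothetical, simplified view of an HTTP response from a "get user" endpoint.
interface ApiResponse {
  status: number;
  headers: Record<string, string>;
  body: unknown;
}

// Returns a list of problems; an empty list means the response passed.
function checkUserResponse(res: ApiResponse): string[] {
  const problems: string[] = [];

  if (res.status !== 200) {
    problems.push(`expected status 200, got ${res.status}`);
  }

  const contentType = res.headers["content-type"] ?? "";
  if (!contentType.includes("application/json")) {
    problems.push(`expected JSON content type, got "${contentType}"`);
  }

  if (typeof res.body !== "object" || res.body === null) {
    problems.push("body is not a JSON object");
    return problems;
  }

  const body = res.body as Record<string, unknown>;
  if (typeof body["id"] !== "number") {
    problems.push("id should be a number");
  }
  const email = body["email"];
  if (typeof email !== "string" || !email.includes("@")) {
    problems.push("email should be a string containing @");
  }
  return problems;
}
```

Collecting every problem instead of stopping at the first one tends to produce more useful bug reports, since a single failing run shows the full extent of a contract break.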

Performance Testing Tools


Source: RoamJobs Testing Tools Survey 2026

| Tool | Best For | Scripting Language | Cloud Options | Learning Curve |
|---|---|---|---|---|
| k6 | Modern load testing | JavaScript | Grafana Cloud k6 | Easy |
| JMeter | Enterprise testing | GUI/Groovy | BlazeMeter, others | Medium |
| Gatling | High-performance | Scala/Java | Gatling Enterprise | Medium-High |
| Locust | Python teams | Python | Locust Cloud | Easy |
| Artillery | Quick load tests | YAML/JavaScript | Artillery Cloud | Easy |

Data compiled from RoamJobs Testing Tools Survey 2026. Last verified January 2026.

k6 has become the preferred performance testing tool for modern engineering teams, offering a JavaScript-based scripting language that’s familiar to most developers, excellent CLI tooling, and seamless Grafana integration for metrics visualization.

JMeter remains widely used in enterprise environments, particularly for complex testing scenarios requiring its extensive plugin ecosystem.
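Load testing tools like these typically report latency percentiles, and pass/fail criteria are often written against them (for example, a p95 budget). As a rough sketch of what such a figure means, the function below computes a percentile with the nearest-rank method. The sample latencies and the 500 ms budget are made up for the example, and individual tools may use a different interpolation method.

```typescript
// Nearest-rank percentile: the smallest sample such that at least p% of
// all samples are less than or equal to it.
function percentile(samples: number[], p: number): number {
  if (samples.length === 0) throw new RangeError("no samples");
  if (p <= 0 || p > 100) throw new RangeError("p must be in (0, 100]");
  const sorted = [...samples].sort((a, b) => a - b);
  const rank = Math.ceil((p * sorted.length) / 100);
  return sorted[rank - 1];
}

// Hypothetical response times in milliseconds from a short load test.
const latenciesMs = [120, 95, 310, 150, 101, 480, 99, 130, 140, 115];

const p50 = percentile(latenciesMs, 50); // 120
const p95 = percentile(latenciesMs, 95); // 480

// A made-up budget: the run passes if 95% of requests finish within 500 ms.
const withinBudget = p95 < 500;
```

The gap between the median (120 ms) and p95 (480 ms) in this sample is the kind of tail behavior that averages hide, which is why percentile budgets are common in performance test reports.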

Test Management and Collaboration Tools

Remote QA teams rely heavily on tools that enable asynchronous collaboration and clear documentation:

Test Management:

- TestRail - Industry-leading test case management with detailed reporting

- Zephyr - Jira-native test management for teams already in the Atlassian ecosystem

- qTest - Enterprise test management with strong traceability features

- Testmo - Modern, lightweight alternative gaining traction in startups

Bug Tracking:

- Jira - Dominant in enterprise, extensive customization options

- Linear - Modern alternative popular with remote-first companies

- GitHub Issues - Sufficient for smaller teams, close to code

Collaboration:

- Loom - Essential for async bug reproduction videos and demos

- Notion - Documentation and test case storage for smaller teams

- Confluence - Enterprise documentation, integrates with Jira

Certifications Worth Pursuing

Certifications can help you stand out, especially when transitioning into QA or seeking remote roles where credentials help establish credibility.

ISTQB (International Software Testing Qualifications Board) The most widely recognized QA certification globally. Start with the Foundation Level, then progress to Advanced Level certifications in Test Analyst, Test Automation Engineer, or Technical Test Analyst based on your specialization.

AWS Certified Developer or Solutions Architect Valuable for QA engineers who work with cloud-native applications and need to understand the infrastructure those applications run on.

Certified Kubernetes Application Developer (CKAD) Relevant for QA engineers testing containerized applications and needing to understand deployment environments.

Tool-Specific Certifications:

- Selenium WebDriver certification (various providers)

- Appium Mobile Testing certification

- Postman API Fundamentals certification (free)

Note on Certifications: While certifications help with resume screening, practical skills demonstrated through portfolios, GitHub contributions, and interview performance ultimately matter more for landing roles.

Companies Hiring Remote QA Engineers

The remote QA job market is robust, with opportunities ranging from startups to established enterprises. Here are companies known for hiring remote QA talent.

Remote-First Companies with Strong Testing Cultures

GitLab - The gold standard for remote work and engineering practices. GitLab’s public handbook includes extensive QA documentation, and they hire QA engineers across all levels. Their testing philosophy emphasizes shift-left testing and developer responsibility for quality.

Automattic (WordPress, WooCommerce, Tumblr) - Fully distributed since founding, Automattic has dedicated QA roles supporting their suite of products. Known for excellent work-life balance and async-first culture.

Zapier - Workflow automation platform with a strong testing culture given their integration-heavy product. QA engineers work across web testing and API integration testing.

Elastic - Distributed company building Elasticsearch and related products. Strong engineering culture with opportunities in both manual and automation QA.

Canonical (Ubuntu) - Fully remote company needing QA across operating system, cloud, and IoT products. Linux expertise highly valued.

HashiCorp - Infrastructure tools company with complex testing requirements. Remote-first with positions in test automation and quality engineering.

Tech Companies with Remote QA Teams

Stripe - Financial infrastructure company with rigorous testing requirements. Remote positions available for experienced QA engineers, particularly in payment systems testing.

Shopify - E-commerce platform with “digital by default” policy. Extensive QA needs across web, mobile, and API testing.

Twilio - Cloud communications platform with strong remote culture. QA opportunities in API testing and communications systems.

Datadog - Monitoring platform with remote positions. Strong focus on performance testing and reliability engineering.

HubSpot - CRM platform with @flex work arrangements. Established QA teams with growth opportunities.

Coinbase - Cryptocurrency exchange requiring security-focused QA. Remote-first with emphasis on automation.

Vercel - Frontend cloud platform. Smaller team but growing, focused on web performance testing.

Supabase - Open-source Firebase alternative. Fully remote, building in the open with growing QA needs.

Agencies and Consultancies

Toptal - Premium freelance network that screens for top talent. Good option for experienced QA engineers seeking contract work.

Testlio - Managed testing services company hiring remote testers globally. Good entry point for those building experience.

Global App Testing - Crowdsourced testing platform offering flexible remote testing work.

Ubertesters - QA services company hiring remote manual and automation testers.

Finding Unlisted QA Opportunities

Many remote QA positions are never posted publicly. Here’s how to discover hidden opportunities:

- Search specialized remote job boards - Start with We Work Remotely and FlexJobs for curated remote QA listings, then check Wellfound for startup QA roles and LinkedIn Remote Jobs for enterprise positions

- Monitor company career pages directly - Set up alerts for target companies rather than relying solely on job boards

- Engage with QA communities - Ministry of Testing, QA subreddits, and testing-focused Slack groups often share opportunities before public posting

- LinkedIn networking - Connect with QA managers at target companies and engage with their content

- Open source contributions - Contributing to testing frameworks or tools can lead to job offers from companies using those tools

- Conference networking - Virtual testing conferences provide access to hiring managers and recruiters

Interview Preparation: Questions and Answers

Remote QA interviews typically include technical assessments, live testing exercises, and behavioral questions evaluating remote work readiness. Here’s a comprehensive guide to the questions you’ll face.

Test Case Design Questions

A comprehensive answer to a test case design question, illustrated here with a login form, demonstrates systematic thinking about test coverage:

Positive Test Cases:

- Valid username and password → successful login

- Login with email vs username (if both supported)

- Login with “remember me” checkbox

- Login after password reset

Negative Test Cases:

- Invalid username with valid password

- Valid username with invalid password

- Both invalid

- Empty username and/or password

- SQL injection attempts in fields

- XSS payloads in input fields

Boundary and Edge Cases:

- Maximum length username/password

- Minimum length credentials

- Special characters in password

- Unicode characters in username

- Case sensitivity testing
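The minimum and maximum length cases above follow boundary value analysis: test just below, at, and just above each limit. A small sketch of generating those lengths is shown here. The limits used in the comment (8 to 64 characters) are assumed for illustration and would come from the real requirements.

```typescript
// Lengths worth testing for a field that accepts min..max characters (inclusive).
function boundaryLengths(min: number, max: number): number[] {
  if (min < 0 || max < min) throw new RangeError("expected 0 <= min <= max");
  const candidates = [min - 1, min, min + 1, max - 1, max, max + 1];
  // Drop negative lengths and duplicates (narrow ranges overlap), keep ascending order.
  const unique = Array.from(new Set(candidates.filter((n) => n >= 0)));
  return unique.sort((a, b) => a - b);
}

// Build an input string of an exact length for a test case.
function stringOfLength(length: number, fill = "a"): string {
  return fill.repeat(length);
}

// Example with an assumed 8-64 character password rule:
// boundaryLengths(8, 64) yields [7, 8, 9, 63, 64, 65]
const passwordInputs = boundaryLengths(8, 64).map((n) => stringOfLength(n));
```

Lengths 7 and 65 should be rejected and the other four accepted, which gives six focused cases instead of an arbitrary spread of lengths.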

Security Test Cases:

- Account lockout after failed attempts

- Session timeout after inactivity

- Secure transmission (HTTPS)

- Password masking in UI

- Login from multiple devices

Usability Test Cases:

- Tab order between fields

- Enter key submits form

- Error messages are clear and helpful

- Screen reader accessibility

The testing pyramid is a framework for structuring automated tests to maximize coverage while minimizing maintenance costs and execution time.

The Pyramid Structure:

Unit Tests (Base - 70%)

- Test individual functions and methods in isolation

- Run in milliseconds, provide fast feedback

- Developers typically write these

- QA influence: Ensure developers have unit test coverage targets

Integration Tests (Middle - 20%)

- Test interactions between components, services, and databases

- Validate API contracts and data flow

- Take seconds to minutes to run

- QA owns API testing, database validation

End-to-End Tests (Top - 10%)

- Test complete user workflows through the UI

- Slowest and most brittle tests

- Reserve for critical business paths

- QA typically owns these entirely

Practical Application: In my experience, I advocate for:

- Pushing testing down: If something can be tested at a lower level, it should be

- Selective E2E coverage: Only critical user journeys get E2E tests

- Contract testing: Using tools like Pact to replace some integration tests

- Monitoring as testing: Using production monitoring to catch issues unit/integration tests miss

Common Anti-Patterns:

- Ice cream cone: Too many E2E tests, not enough unit tests

- Hourglass: Missing integration layer

- Over-testing: Same scenario tested at multiple levels
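The shapes described above can be checked against real test counts. The following sketch classifies a suite from the number of tests at each level. The rules are illustrative heuristics chosen for this example, not an industry-standard definition, and counts alone say nothing about test quality or run time.

```typescript
interface SuiteCounts {
  unit: number;
  integration: number;
  e2e: number;
}

type SuiteShape = "pyramid" | "ice cream cone" | "hourglass" | "other";

// Heuristic classification based only on how many tests exist at each level.
function classifySuite({ unit, integration, e2e }: SuiteCounts): SuiteShape {
  if (unit + integration + e2e === 0) return "other";
  // More E2E tests than unit tests: the pyramid is upside down.
  if (e2e > unit) return "ice cream cone";
  // A thin middle layer between a wide base and a wide top.
  if (integration < unit && integration < e2e) return "hourglass";
  // Each layer is no larger than the one below it.
  if (unit >= integration && integration >= e2e) return "pyramid";
  return "other";
}

// classifySuite({ unit: 700, integration: 200, e2e: 100 }) returns "pyramid"
// classifySuite({ unit: 100, integration: 200, e2e: 700 }) returns "ice cream cone"
// classifySuite({ unit: 500, integration: 50, e2e: 300 }) returns "hourglass"
```

In an interview, walking through numbers like these for a suite you have worked on is a concrete way to show you have applied the pyramid rather than only memorized it.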

This scenario, a critical bug discovered shortly before a release, tests your ability to handle pressure, communicate effectively, and make risk-based decisions.

Immediate Actions (First 30 minutes):

- Reproduce the bug - Confirm it’s reproducible and understand exact conditions

- Assess severity - Determine user impact, data integrity risks, security implications

- Document thoroughly - Create a detailed bug report with reproduction steps

- Notify stakeholders - Alert the development team lead and product manager

- Check for workarounds - Identify if users can accomplish their goals another way

Investigation Phase:

- Identify root cause - Work with developers to understand what’s happening

- Determine scope - How many users affected? Which features impacted?

- Check for regressions - Was this working before? What changed?

- Review recent deployments - Identify potential cause from recent changes

Decision Support: Present options to decision-makers with risk analysis:

- Option A: Delay release to fix - Timeline, resource impact

- Option B: Release with known issue - User impact, reputation risk

- Option C: Rollback and investigate - Customer impact of rollback

- Option D: Hotfix before release - Risk of rushed fix

Post-Incident:

- Conduct root cause analysis

- Update test coverage to catch similar issues

- Document in runbook for future reference

- Implement monitoring for early detection

Strategic automation decisions balance ROI, maintenance costs, and testing effectiveness.

Automate When:

- Tests will run frequently (regression, smoke tests)

- Execution is repetitive and time-consuming

- Tests require precise timing or data validation

- Coverage needs to span multiple browsers/devices/environments

- Tests involve complex data setup that’s error-prone manually

- Results need to be consistent and comparable over time

Keep Manual When:

- Feature is still changing frequently

- Test requires human judgment (UX, visual design)

- One-time or rare testing needs

- Exploratory testing to find unexpected issues

- Automation cost exceeds benefit (complex, rare scenarios)

- Test requires physical device interaction automation can’t replicate

ROI Framework:

Calculate automation ROI: (Manual Time x Frequency) - (Automation Time + Maintenance)

For example:

- Manual test takes 30 minutes

- Run 10 times per sprint (2 weeks)

- Automation takes 4 hours to write

- Maintenance averages 30 minutes per sprint

ROI over 6 months (12 sprints): (30 min x 10 x 12) - (4 hrs + 30 min x 12) = 60 hrs - 10 hrs = 50 hrs saved
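The same arithmetic can be written as a small function, which makes it easy to rerun with your own numbers. This is a sketch of the formula above with all inputs in minutes; the field names are made up for the example. With the figures from the list, manual effort is 3,600 minutes (60 hours) and automation cost is 600 minutes (10 hours), for a net saving of 50 hours.

```typescript
interface RoiInputs {
  manualMinutesPerRun: number;
  runsPerSprint: number;
  sprints: number;
  automationBuildMinutes: number;
  maintenanceMinutesPerSprint: number;
}

// (Manual Time x Frequency) - (Automation Time + Maintenance), returned in hours.
function automationRoiHours(i: RoiInputs): number {
  const manualCost = i.manualMinutesPerRun * i.runsPerSprint * i.sprints;
  const automationCost = i.automationBuildMinutes + i.maintenanceMinutesPerSprint * i.sprints;
  return (manualCost - automationCost) / 60;
}

// Number of sprints until the up-front build time is paid back.
function breakEvenSprints(i: RoiInputs): number {
  const savedPerSprint = i.manualMinutesPerRun * i.runsPerSprint - i.maintenanceMinutesPerSprint;
  if (savedPerSprint <= 0) return Infinity; // maintenance eats the whole saving
  return Math.ceil(i.automationBuildMinutes / savedPerSprint);
}

const exampleInputs: RoiInputs = {
  manualMinutesPerRun: 30,
  runsPerSprint: 10,
  sprints: 12,
  automationBuildMinutes: 4 * 60,
  maintenanceMinutesPerSprint: 30,
};

const savedHours = automationRoiHours(exampleInputs); // 50
const payback = breakEvenSprints(exampleInputs); // 1
```

The break-even figure is often the more persuasive number in planning discussions: here the four hours of build time are recovered within the first sprint.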

Prioritization Matrix:

- High value, Low complexity: Automate first

- High value, High complexity: Automate, invest in good framework

- Low value, Low complexity: Automate if time permits

- Low value, High complexity: Keep manual
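As a rough illustration, the matrix above can be applied to a backlog of candidate tests to produce an automation order. The `Candidate` shape and the numeric ranks are assumptions for this sketch, and rating a test as high or low value remains a judgment call the code cannot make for you.

```typescript
type Level = "high" | "low";

interface Candidate {
  name: string;
  value: Level;
  complexity: Level;
}

// Lower number = earlier in the automation backlog; null = keep manual.
function automationOrder(value: Level, complexity: Level): number | null {
  if (value === "high") return complexity === "low" ? 1 : 2;
  return complexity === "low" ? 3 : null;
}

// Split candidates into an ordered automation backlog and a manual list.
function planBacklog(candidates: Candidate[]): { automate: string[]; manual: string[] } {
  const ranked = candidates.map((c) => ({
    name: c.name,
    order: automationOrder(c.value, c.complexity),
  }));
  const automate = ranked
    .filter((r): r is { name: string; order: number } => r.order !== null)
    .sort((a, b) => a.order - b.order)
    .map((r) => r.name);
  const manual = ranked.filter((r) => r.order === null).map((r) => r.name);
  return { automate, manual };
}
```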

This question, which asks you to write an automated Playwright test for a shopping cart checkout flow, assesses your coding ability, framework knowledge, and test design skills.

```typescript
import { test, expect } from '@playwright/test';

test.describe('Shopping Cart Checkout', () => {
  test.beforeEach(async ({ page }) => {
    // Start with a clean state - logged in user with empty cart
    await page.goto('/');
    await page.getByRole('button', { name: 'Sign In' }).click();
    // Credentials come from environment variables; the empty-string fallback
    // keeps the call type-safe when a variable is not set
    await page.fill('[data-testid="email"]', process.env.TEST_USER_EMAIL ?? '');
    await page.fill('[data-testid="password"]', process.env.TEST_USER_PASSWORD ?? '');
    await page.click('[data-testid="submit-login"]');
    await expect(page.getByText('Welcome back')).toBeVisible();
  });

  test('should complete checkout with valid payment', async ({ page }) => {
    // Add product to cart
    await page.goto('/products/test-product-123');
    await page.click('[data-testid="add-to-cart"]');
    await expect(page.getByTestId('cart-count')).toHaveText('1');

    // Navigate to cart
    await page.click('[data-testid="cart-icon"]');
    await expect(page).toHaveURL('/cart');

    // Verify cart contents
    await expect(page.getByTestId('cart-item')).toHaveCount(1);
    await expect(page.getByTestId('cart-total')).toContainText('$');

    // Proceed to checkout
    await page.click('[data-testid="checkout-button"]');
    await expect(page).toHaveURL('/checkout');

    // Fill shipping information
    await page.fill('[data-testid="shipping-address"]', '123 Test Street');
    await page.fill('[data-testid="shipping-city"]', 'Test City');
    await page.selectOption('[data-testid="shipping-state"]', 'CA');
    await page.fill('[data-testid="shipping-zip"]', '90210');

    // Fill payment information (using test card)
    await page.fill('[data-testid="card-number"]', '4242424242424242');
    await page.fill('[data-testid="card-expiry"]', '12/28');
    await page.fill('[data-testid="card-cvc"]', '123');

    // Complete order
    await page.click('[data-testid="place-order"]');

    // Verify success
    await expect(page).toHaveURL(/\/order-confirmation/);
    await expect(page.getByText('Order Confirmed')).toBeVisible();
    await expect(page.getByTestId('order-number')).toBeVisible();
  });

  test('should show error for invalid payment', async ({ page }) => {
    // ... setup steps similar to above ...
    await page.fill('[data-testid="card-number"]', '4000000000000002'); // Declined card
    await page.click('[data-testid="place-order"]');

    await expect(page.getByText('Payment declined')).toBeVisible();
    await expect(page).toHaveURL('/checkout'); // Should stay on checkout
  });
});
```

Key points to discuss:

- Use of Page Object Model for larger test suites

- Data-testid attributes for stable selectors

- Environment variables for sensitive data

- Reliance on Playwright’s auto-waiting and web-first assertions instead of manual waits or sleeps

- Meaningful assertions at each step

- Error case coverage
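
To make the Page Object Model point concrete, here is one possible shape for a checkout page object. It is a sketch under stated assumptions: `PageLike` is a hypothetical structural subset of Playwright's `Page` (only `fill`, `click`, and `selectOption`), declared locally so the example stands alone, and `CheckoutPage`, `ShippingInfo`, and `CardInfo` are illustrative names. The selectors reuse the `data-testid` values from the test above.

```typescript
// Hypothetical subset of Playwright's Page; a real Page object satisfies it.
interface PageLike {
  fill(selector: string, value: string): Promise<void>;
  click(selector: string): Promise<void>;
  selectOption(selector: string, value: string): Promise<unknown>;
}

interface ShippingInfo { address: string; city: string; state: string; zip: string }
interface CardInfo { number: string; expiry: string; cvc: string }

// Keeps the selector convention in one place.
const byTestId = (id: string): string => `[data-testid="${id}"]`;

class CheckoutPage {
  private readonly page: PageLike;

  constructor(page: PageLike) {
    this.page = page;
  }

  async fillShipping(info: ShippingInfo): Promise<void> {
    await this.page.fill(byTestId("shipping-address"), info.address);
    await this.page.fill(byTestId("shipping-city"), info.city);
    await this.page.selectOption(byTestId("shipping-state"), info.state);
    await this.page.fill(byTestId("shipping-zip"), info.zip);
  }

  async fillCard(card: CardInfo): Promise<void> {
    await this.page.fill(byTestId("card-number"), card.number);
    await this.page.fill(byTestId("card-expiry"), card.expiry);
    await this.page.fill(byTestId("card-cvc"), card.cvc);
  }

  async placeOrder(): Promise<void> {
    await this.page.click(byTestId("place-order"));
  }
}
```

In a test, `new CheckoutPage(page)` would wrap the `page` fixture, so a selector change touches one class rather than every test that goes through checkout.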

Flaky tests undermine confidence in automation and waste engineering time. A comprehensive strategy addresses both immediate issues and systemic causes.

Immediate Triage:

- Quarantine the flaky test - Move to a separate suite that doesn’t block deployments

- Analyze failure patterns - Review screenshots, logs, and timing from recent failures

- Identify root cause - Timing issues, test data conflicts, environmental factors

Common Causes and Solutions:

Timing Issues:

- Add explicit waits for dynamic content

- Use framework-provided wait mechanisms (Playwright auto-waiting)

- Avoid arbitrary sleep() calls

Test Data Problems:

- Isolate test data per test run

- Use factory patterns to create test data

- Clean up data after tests complete

Environmental Issues:

- Ensure consistent test environments (Docker)

- Mock external services where appropriate

- Handle network variability gracefully

Test Design Issues:

- Make tests independent - no shared state between tests

- Use reliable selectors (data-testid, accessibility attributes)

- Keep tests focused on one behavior

Systemic Prevention:

- Monitor flaky test metrics over time

- Set thresholds: tests failing >5% of runs get investigated

- Regular maintenance sprints to address test debt

- Code review automation code as rigorously as production code
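
The 5% threshold above can be checked mechanically once run history is available. The sketch below assumes the history has already been exported from CI into a simple array; the `TestHistory` shape and the sample test names are hypothetical.

```typescript
// Hypothetical per-test summary exported from CI run history.
interface TestHistory {
  name: string;
  runs: number;
  failures: number;
}

// Returns tests whose failure rate exceeds the threshold, worst first.
function needsInvestigation(history: TestHistory[], threshold = 0.05): string[] {
  return history
    .filter((t) => t.runs > 0 && t.failures / t.runs > threshold)
    .sort((a, b) => b.failures / b.runs - a.failures / a.runs)
    .map((t) => t.name);
}

// Illustrative data only.
const recentRuns: TestHistory[] = [
  { name: "checkout completes", runs: 200, failures: 4 },  // 2% failure rate
  { name: "cart badge updates", runs: 200, failures: 30 }, // 15% failure rate
  { name: "search filters", runs: 100, failures: 8 },      // 8% failure rate
];
const flagged = needsInvestigation(recentRuns);
```

Here `flagged` contains "cart badge updates" followed by "search filters", while the 2% test stays below the threshold.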

Retry Strategy (Last Resort): Implement smart retries with:

- A maximum retry count (usually 2-3)

- Logging whenever a retry occurs, for later analysis

- No retries for tests that fail deterministically
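
Playwright exposes a `retries` option in its configuration, which covers the common case. Where a custom wrapper is needed, the three rules above could look roughly like the sketch below. The `withRetries` helper, the `RetryLogEntry` type, and the `isDeterministic` callback are all hypothetical names introduced for illustration.

```typescript
// Hypothetical log record kept for later flakiness analysis.
interface RetryLogEntry {
  attempt: number; // 1-based attempt number that failed
  error: string;
}

// Runs `action`, retrying up to `maxRetries` times after the first attempt.
// Failures classified as deterministic are rethrown immediately.
async function withRetries<T>(
  action: () => Promise<T>,
  maxRetries: number,
  isDeterministic: (err: unknown) => boolean,
  log: RetryLogEntry[],
): Promise<T> {
  for (let attempt = 0; ; attempt++) {
    try {
      return await action();
    } catch (err) {
      log.push({ attempt: attempt + 1, error: String(err) });
      if (isDeterministic(err) || attempt >= maxRetries) {
        throw err;
      }
    }
  }
}
```

The log is passed in rather than printed so that the caller decides where retry data goes, for example into the same dashboard that tracks flaky test metrics.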

API testing validates the business logic layer independent of UI, providing faster feedback and more stable tests.

Testing Strategy:

Functional Testing:

- Verify correct responses for valid requests

- Test error handling for invalid inputs

- Validate response schemas and data types

- Check business logic rules and calculations

Contract Testing:

- Verify API matches documented specification (OpenAPI/Swagger)

- Use tools like Pact for consumer-driven contracts

- Ensure backward compatibility for API changes

Security Testing:

- Authentication and authorization validation

- Input validation and injection prevention

- Rate limiting and throttling

- Sensitive data exposure checks

Performance Testing:

- Response time benchmarks

- Load testing with concurrent requests

- Stress testing to find breaking points

Testing Approach by HTTP Method:

GET requests:

- Valid resource returns 200 with correct data

- Non-existent resource returns 404

- Unauthorized access returns 401/403

- Query parameters filter correctly

POST requests:

- Valid payload creates resource (201)

- Invalid payload returns validation errors (400)

- Duplicate resources handled appropriately

- Response includes created resource or ID

PUT/PATCH requests:

- Updates modify correct fields

- Partial updates (PATCH) vs full replacement (PUT)

- Optimistic locking (ETags) if implemented

DELETE requests:

- Successful deletion returns 204 or 200

- Deleting a non-existent resource behaves idempotently (typically 404 or 204, depending on the API contract)

- Cascading deletes handled correctly

Tools I Use:

- Postman for exploration and manual testing

- Playwright or REST Assured for automation

- k6 or JMeter for performance testing

- Pact for contract testing
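
As a small illustration of the functional checks for GET requests (status code plus response schema), the sketch below validates a response that has already been fetched. Everything in it is an assumption made for the example: the `User` shape, the `ApiResponse` wrapper, and the idea of a user endpoint are hypothetical, and a real suite would more likely use a schema library or the test framework's own assertions.

```typescript
// Hypothetical wrapper around an HTTP response that was already received.
interface ApiResponse {
  status: number;
  body: unknown;
}

// Hypothetical resource schema.
interface User {
  id: number;
  email: string;
}

// Type guard: checks field presence and data types at runtime.
function isUser(value: unknown): value is User {
  if (typeof value !== "object" || value === null) return false;
  const v = value as Record<string, unknown>;
  return typeof v["id"] === "number" && typeof v["email"] === "string";
}

// Returns a list of problems; an empty list means the response passed.
function checkGetUser(res: ApiResponse): string[] {
  const problems: string[] = [];
  if (res.status !== 200) problems.push(`expected status 200, got ${res.status}`);
  if (!isUser(res.body)) problems.push("body does not match the User schema");
  return problems;
}

const okProblems = checkGetUser({ status: 200, body: { id: 1, email: "qa@example.com" } });
const badProblems = checkGetUser({ status: 200, body: { id: "1" } });
```

Returning a list of problems instead of throwing on the first one makes a single failed run report every mismatch at once.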

Behavioral and Remote Work Questions

Use the STAR method (Situation, Task, Action, Result) to structure your answer:

Example Response:

Situation: “During regression testing for a major e-commerce release, I discovered that the checkout process was silently failing for orders over $10,000—it would accept the order but not process the payment or create the order record.”

Task: “I needed to assess the severity, communicate the issue effectively, and help the team make an informed decision about the release scheduled for the next morning.”

Action:

- “I immediately documented the bug with detailed reproduction steps and created a Loom video showing the issue”

- “I assessed impact: this affected approximately 5% of orders by volume but 30% of revenue”

- “I notified the tech lead and product manager with a clear severity assessment”

- “I investigated the root cause with the developer and found it was a recent change to payment processing logic”

- “I tested the proposed fix in our staging environment within 2 hours”

Result: “We delayed the release by 4 hours to properly fix and verify the issue. The fix went out with full regression testing. I also added automated tests for high-value orders to prevent similar issues. The bug would have cost an estimated $200K+ in lost orders had it reached production.”

Key Points to Emphasize:

- Clear, calm communication under pressure

- Quantifying business impact

- Proactive problem-solving

- Documentation and prevention

This question assesses your risk-based thinking and time management skills.

My Prioritization Framework:

1. Risk Assessment:

- Business impact: Revenue-affecting features get priority

- User exposure: Features affecting all users vs. subset

- Complexity: More complex features are higher risk

- Change scope: Larger changes need more testing

2. Coverage Strategy:

- Focus on critical paths first (happy path for core features)

- Then negative scenarios for highest-risk areas

- Then edge cases based on available time

3. Communication:

- Discuss priorities with product manager and tech lead

- Be transparent about what won’t be covered

- Document testing scope and limitations

Example from Experience:

“In a recent sprint, I had three features to test with two days remaining: a new payment method (high revenue impact), a profile settings change (low risk), and a search filter enhancement (medium usage).

I allocated:

- 60% time to payment method: full regression, edge cases, error handling

- 25% time to search filter: core functionality, basic edge cases

- 15% time to profile settings: smoke testing only

I communicated this plan to the team and documented that profile settings had limited coverage. The release went smoothly, and the payment feature had zero issues.”
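
The split in this example is simple proportional allocation. As a sketch, assuming the two remaining days amount to 16 working hours (that figure is an assumption, not part of the story above), the percentages translate into hours like this; `allocateHours` is an illustrative helper.

```typescript
// Distributes a time budget in proportion to risk weights, rounded to 0.1 h.
function allocateHours(
  totalHours: number,
  weights: Record<string, number>,
): Record<string, number> {
  const sum = Object.values(weights).reduce((a, b) => a + b, 0);
  const plan: Record<string, number> = {};
  for (const [feature, weight] of Object.entries(weights)) {
    plan[feature] = Math.round(((totalHours * weight) / sum) * 10) / 10;
  }
  return plan;
}

// Weights taken from the 60/25/15 split; 16 hours is an assumed budget.
const plan = allocateHours(16, { payment: 60, search: 25, profile: 15 });
```

Under that assumption the plan works out to 9.6 hours for the payment method, 4 hours for the search filter, and 2.4 hours for profile settings.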

Productivity Strategies:

Structured Work Environment:

- Dedicated workspace with minimal distractions

- Consistent daily routine with defined start/end times

- Time blocking for focused testing vs. meetings vs. communication

Self-Management Techniques:

- Daily planning: identify top 3 priorities each morning

- Pomodoro technique for focused testing sessions

- Regular breaks to maintain concentration

- End-of-day documentation of progress and blockers

Staying Connected:

Asynchronous Communication:

- Detailed written updates in Slack/Teams

- Thorough bug reports that don’t require follow-up questions

- Documentation that enables others to work independently

Synchronous Touchpoints:

- Daily standups for alignment

- Pair testing sessions with developers

- Regular 1:1s with manager

Building Relationships:

- Participate in virtual social events

- Use video for important conversations

- Share non-work interests in appropriate channels

- Recognize and appreciate teammates publicly

Tools I Rely On:

- Slack for quick communication

- Loom for async demos and explanations

- Notion for documentation

- Calendly for scheduling across time zones

This tests your communication skills and ability to handle conflict professionally.

Example Response:

Situation: “I found an issue where our date picker allowed users to select past dates when booking appointments—which should be impossible. The developer argued it was working as designed because the requirements didn’t explicitly prohibit it.”

Approach:

- Gathered evidence: I documented the user journey and how past date selection led to errors downstream

- Sought clarification: Checked with the product manager about intended behavior

- Focused on user impact: Instead of arguing about requirements interpretation, I showed the actual user experience issue

- Proposed solutions: Offered both a quick fix (frontend validation) and comprehensive fix (backend validation)

Resolution: “The product manager confirmed past dates should be blocked. More importantly, we established a process for flagging ambiguous requirements during planning rather than during testing. I also suggested adding acceptance criteria templates that included edge case considerations.”

Key Principles:

- Focus on user experience, not who’s right

- Bring data and evidence, not opinions

- Involve stakeholders when needed

- Suggest process improvements to prevent future conflicts

Effective communication with stakeholders requires translating technical details into business impact.

Metrics I Report:

For Executives:

- Release readiness: Go/No-Go with confidence level

- Critical bug count and trend over time

- Test coverage of high-risk features

- Escaped defects (bugs found in production)

For Product Managers:

- Feature-specific quality status

- Blocking issues and timeline impact

- User-facing bugs by severity

- Regression risk for related features

Communication Formats:

Sprint/Release Reports:

- Visual dashboards (charts over tables)

- Trend lines showing improvement or concerns

- Clear red/yellow/green status indicators

- Actionable recommendations

Status Updates:

- Lead with the headline: “Checkout is ready; Profile has 2 blockers”

- Quantify impact: “Affects ~5% of users”

- Provide timeline: “Fix expected by EOD Wednesday”

Risk Communication:

- Be direct about concerns without being alarmist

- Offer options: “We can release with known issue or delay 2 days”

- Support recommendations with data

Tools:

- TestRail/Zephyr for test execution metrics

- Jira dashboards for bug trends

- Custom Notion/Confluence pages for executive summaries

- Grafana for automation metrics

Shift-left testing moves quality activities earlier in the development cycle, catching issues when they’re cheaper to fix.

Implementation Strategies:

Requirements Phase:

- Participate in backlog refinement to identify testability issues

- Add acceptance criteria during story writing

- Create test scenarios before development begins

- Flag ambiguous requirements that will cause testing problems

Design Phase:

- Review technical designs for testability

- Identify integration points requiring contract testing

- Suggest feature flags for safe testing in production

- Plan test data requirements early

Development Phase:

- Pair with developers on test case design

- Provide test frameworks developers can use for unit tests

- Code review for testability

- Enable TDD/BDD practices

Developer Enablement:

- Create shared test utilities and helpers

- Document testing best practices

- Provide test environment access and documentation

- Offer test automation training

Measuring Success:

- Defect detection phase: Where are bugs found?

- Cost of quality: Less rework due to earlier detection

- Cycle time: Faster releases with fewer late-stage issues

- Developer satisfaction: Less “throw over the wall”
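
The first metric, where bugs are found, is straightforward to compute from a bug tracker export. The sketch below assumes each defect has been tagged with the phase in which it was detected; the four phase names are an illustrative choice, not a standard.

```typescript
// Hypothetical set of detection phases used to tag each defect.
type Phase = "requirements" | "development" | "qa" | "production";

const PHASES: Phase[] = ["requirements", "development", "qa", "production"];

// Percentage of defects detected in each phase, rounded to whole numbers.
function detectionShare(defects: Phase[]): Record<Phase, number> {
  const counts: Record<Phase, number> = { requirements: 0, development: 0, qa: 0, production: 0 };
  for (const phase of defects) {
    counts[phase] += 1;
  }
  const total = defects.length;
  const share: Record<Phase, number> = { requirements: 0, development: 0, qa: 0, production: 0 };
  if (total === 0) return share;
  for (const phase of PHASES) {
    share[phase] = Math.round((counts[phase] / total) * 100);
  }
  return share;
}

// Illustrative data: 1 of 4 defects escaped to production.
const share = detectionShare(["qa", "qa", "development", "production"]);
```

A shift-left effort would aim to move the distribution toward the earlier phases over time, with the production share (escaped defects) trending down.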

Cultural Change: Shift-left requires cultural change, not just process change:

- Consistent “quality is everyone’s responsibility” messaging

- Celebrate developers writing good tests

- Remove blame culture around bugs

- Make testing easy through good tooling

Mobile testing introduces unique challenges beyond web testing.

Mobile-Specific Testing Areas:

Device and OS Fragmentation:

- Multiple screen sizes and resolutions

- Different Android manufacturers and skins

- iOS vs. Android behavior differences

- Various OS versions with different capabilities

Network Conditions:

- Test on 3G, 4G, 5G, and WiFi

- Offline functionality and sync

- Network transitions (WiFi to cellular)

- Poor connectivity handling

Device Resources:

- Memory constraints and leaks

- Battery consumption

- Storage space limitations

- CPU performance on older devices

Mobile-Specific Features:

- Push notifications

- Camera and gallery access

- Location services

- Biometric authentication

- Deep linking

Interruption Handling:

- Incoming calls during app use

- Switching to other apps

- Low battery warnings

- System notifications

Installation and Updates:

- Fresh install vs. upgrade paths

- App store submission requirements

- Force update mechanisms

- Data migration between versions

Testing Approaches:

Device Strategy:

- Prioritize devices by user analytics

- Mix of real devices and emulators

- Cloud device farms (BrowserStack, Sauce Labs)
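
Prioritizing devices by user analytics can be reduced to a greedy selection: take the most-used devices until a target share of users is covered. The sketch below assumes analytics are available as percentage shares per device; the `DeviceShare` type, the device names, and the numbers are all made up for illustration.

```typescript
// Hypothetical analytics row: share is the percentage of users on that device.
interface DeviceShare {
  device: string;
  share: number;
}

// Picks the most-used devices until the target coverage (in percent) is reached.
function pickDevices(usage: DeviceShare[], targetCoverage: number): string[] {
  const sorted = [...usage].sort((a, b) => b.share - a.share);
  const picked: string[] = [];
  let covered = 0;
  for (const entry of sorted) {
    if (covered >= targetCoverage) break;
    picked.push(entry.device);
    covered += entry.share;
  }
  return picked;
}

// Illustrative data only.
const usage: DeviceShare[] = [
  { device: "Device C", share: 20 },
  { device: "Device A", share: 40 },
  { device: "Device D", share: 10 },
  { device: "Device B", share: 30 },
];
const testMatrix = pickDevices(usage, 80);
```

With these shares, an 80% target selects Device A, Device B, and Device C (90% combined), and Device D is left for emulator or cloud-farm coverage.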

Automation Tools:

- Appium for cross-platform

- XCUITest for iOS native

- Espresso for Android native

- Detox for React Native

Example Response:

Situation: “Our regression testing took 8 hours of manual effort each release, and we were releasing twice per week. This was unsustainable and causing burnout.”

Analysis:

- Mapped all 200+ regression test cases

- Identified 60% were stable and repetitive—good automation candidates

- Found 20% were rarely finding bugs—low value

- Remaining 20% were exploratory—keep manual

Actions:

- Prioritized 40 high-value, stable test cases for automation

- Built a Playwright framework with our most common patterns

- Integrated with GitHub Actions for PR validation

- Created a smoke suite for deployment verification

- Deprecated 15% of tests that hadn’t found bugs in 6+ months

Results:

- Reduced manual regression from 8 hours to 2 hours

- Automation runs on every PR (20+ times/day)

- Found 3 critical bugs that manual testing missed (timing-sensitive issues)

- Team morale improved significantly

- Able to release daily instead of twice weekly

Key Learnings:

- Start with highest ROI automation targets

- Don’t try to automate everything at once

- Invest in good framework design early

- Measure and communicate results

This is a common real-world challenge that tests your adaptability and communication skills.

My Approach:

1. Clarify What You Can:

- Ask specific questions to product/developers

- Document assumptions explicitly

- Identify what IS known vs. uncertain

2. Risk-Based Initial Testing:

- Focus on core functionality that’s unlikely to change

- Test fundamental user flows

- Defer edge case testing until requirements stabilize

3. Exploratory Testing:

- Use session-based testing to document findings

- Note behavior that might be intentional or a bug

- Capture questions for clarification

4. Flexible Test Design:

- Write tests that are easy to update

- Use data-driven approaches for likely-to-change values

- Document test cases with version/assumption notes

5. Continuous Clarification:

- Regular check-ins during development

- Share findings early to influence requirements

- Flag when observed behavior differs from expectations

6. Documentation:

- Track all clarifications and decisions

- Note what was tested vs. what was deferred

- Create “testing debt” items for incomplete coverage

Communication Example: “I’ve completed testing on the core checkout flow. However, the discount calculation rules weren’t specified, so I tested basic percentage discounts only. I’ve documented 5 questions about stacking discounts and expiration dates. Once those are clarified, I estimate 4 hours additional testing.”
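
The data-driven approach from step 4 fits this situation well: when discount rules are still being clarified, new cases can be added as rows rather than as new test code. The sketch below is hypothetical throughout. `applyPercentDiscount` stands in for the system under test, and its rounding rule (nearest cent) is an assumption that would need confirming with the product team.

```typescript
// Stand-in for the system under test; prices are in cents to avoid float drift.
function applyPercentDiscount(priceCents: number, percent: number): number {
  if (percent < 0 || percent > 100) {
    throw new RangeError("percent must be between 0 and 100");
  }
  return Math.round((priceCents * (100 - percent)) / 100);
}

// Each row is one test case; the note records the assumption behind it.
interface DiscountCase {
  priceCents: number;
  percent: number;
  expectedCents: number;
  note: string;
}

const cases: DiscountCase[] = [
  { priceCents: 10000, percent: 0, expectedCents: 10000, note: "no discount" },
  { priceCents: 10000, percent: 10, expectedCents: 9000, note: "basic percentage" },
  { priceCents: 9999, percent: 50, expectedCents: 5000, note: "assumes rounding to nearest cent" },
  { priceCents: 10000, percent: 100, expectedCents: 0, note: "full discount" },
];

// Collect every failing row instead of stopping at the first one.
const failures = cases.filter(
  (c) => applyPercentDiscount(c.priceCents, c.percent) !== c.expectedCents,
);
```

Once the stacking and expiration questions are answered, the new rules become additional rows, and the notes double as the version and assumption record mentioned above.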

Frequently Asked Questions

Do I need to know how to code to become a QA Engineer?

Entry-level manual QA positions often don't require coding skills, making them accessible starting points. However, to advance your career and access higher-paying roles, programming knowledge becomes essential. QA Automation Engineers and SDETs need strong coding skills (typically Python, JavaScript, or Java). Even manual testers benefit from basic scripting for tasks like data generation or log analysis. If you're starting without coding experience, begin learning Python or JavaScript while working in manual QA—most engineers make this transition within 1-2 years.

What's the difference between a QA Engineer and an SDET?

QA Engineers focus on ensuring product quality through a mix of manual testing, test case design, and test automation. SDETs (Software Development Engineers in Test) are software developers who specialize in building test infrastructure, frameworks, and tools. SDETs typically have stronger programming skills, contribute to production code for testability improvements, and build custom testing solutions. Compensation reflects this: SDETs earn 15-25% more than QA Automation Engineers. Think of it as a spectrum—as your coding skills grow, you can transition from QA Engineer toward SDET.

Is manual testing becoming obsolete?

No, but its role is evolving. While automation handles repetitive regression testing more efficiently, skilled manual testers remain essential for exploratory testing, usability evaluation, edge case discovery, and testing features not yet stable enough to automate. The highest demand is for testers who can do both: design comprehensive manual test strategies AND implement automation. Pure manual testing roles are declining and pay less, so building automation skills is crucial for long-term career growth. The ideal is being a hybrid tester who knows when each approach is appropriate.

How do I transition from development to QA, or vice versa?

Developer to QA: Your coding skills are highly valued—you can move directly into SDET or QA Automation roles. Emphasize your understanding of code architecture, debugging skills, and ability to write testable code. Learning testing fundamentals (ISTQB concepts, test design techniques) fills the gap. QA to Developer: Build projects outside of work to demonstrate production coding ability. Your testing background is an asset—you understand edge cases and quality from the start. Consider transitioning through SDET roles, where you can build increasingly complex systems while leveraging your QA expertise.

What certifications are most valuable for remote QA roles?

ISTQB Foundation Level is the most widely recognized and helps with resume screening, especially for candidates without traditional CS backgrounds. For automation-focused roles, tool-specific certifications (Selenium, Appium) can help but matter less than demonstrated ability through GitHub projects. Cloud certifications (AWS, Azure) are increasingly valuable as testing moves to cloud environments. However, certifications alone won't land you a job—they're most valuable when combined with practical experience and a portfolio of testing work.

How long does it take to land a remote QA job?

For experienced QA engineers with automation skills, expect 4-8 weeks of active job searching. Entry-level candidates or those transitioning into QA may need 2-4 months. The timeline depends on your experience level, automation skills, location (for timezone compatibility), and the selectiveness of companies you're targeting. Remote-first companies often have longer interview processes (4-6 rounds) but faster feedback between rounds. Applying to 5-10 targeted positions per week, following up appropriately, and continuously improving your interview skills based on feedback will optimize your timeline.

Should I specialize in a specific type of testing?

Specialization can accelerate your career and increase earning potential, especially in: API testing (always in demand), performance testing (fewer qualified candidates), security testing (growing rapidly), or mobile testing (platform-specific expertise). However, early in your career, breadth is valuable—understanding multiple testing types makes you more versatile. The best approach is developing T-shaped skills: broad knowledge across testing domains with deep expertise in one area. Choose specialization based on interest and market demand—performance and security testing currently offer the best supply/demand dynamics.

What's the best programming language for test automation?

JavaScript/TypeScript leads in 2026 due to framework support (Playwright, Cypress) and frontend testing needs. Python is excellent for API testing, data validation, and teams using Python backends. Java remains dominant in enterprise environments with Selenium. The best choice depends on your target companies and products: web-focused companies often prefer JavaScript; enterprise and finance favor Java; startups and ML companies lean toward Python. If starting fresh, JavaScript/TypeScript offers the broadest opportunities and works with the most modern frameworks.

How do remote QA teams handle communication across time zones?

Successful remote QA teams rely heavily on asynchronous communication: detailed bug reports that don't require clarification, comprehensive test documentation, recorded Loom videos for complex issues, and well-organized knowledge bases. Synchronous overlap is reserved for critical issues and collaborative sessions. Teams typically establish core hours (usually 3-4 hours overlap) for meetings and real-time collaboration. As a remote QA engineer, you'll need strong written communication skills and the ability to work independently while staying connected with your team through async channels.

Can I work remotely for a US company from another country?

Yes, many US companies hire QA engineers globally, though it depends on the company's legal structure and your location. Remote-first companies like GitLab and Zapier hire across 50+ countries. Others use Employer of Record (EOR) services to hire internationally. Considerations include timezone overlap requirements (many prefer Americas or European timezones for collaboration), tax and legal implications in your country, and compensation adjustments (some companies pay location-agnostic rates, others adjust for local markets). Research each company's hiring policies and EOR partnerships.

What's the career ceiling for QA Engineers?

QA offers clear advancement paths to VP/Director levels ($200K-$300K+ compensation) and distinguished IC roles (Staff/Principal QA Engineers at $180K-$250K). The field has evolved significantly—today's QA leaders are technical executives influencing product strategy, not just test managers. Career growth options include: IC track (Senior → Staff → Principal → Distinguished), management track (Lead → Manager → Director → VP), or transitioning to adjacent roles (Engineering Management, Product Management, Developer Relations). The key to advancement is combining deep technical skills with business impact and leadership ability.

How do I build a QA portfolio without professional experience?

Create a GitHub repository showcasing test automation for open-source or personal projects. Write test cases for popular applications and document your findings. Contribute to open-source testing frameworks or tools. Participate in bug bounty programs to find real issues. Document your testing process through blog posts. Create sample test plans and strategy documents for hypothetical scenarios. Record Loom videos walking through your testing approach. These artifacts demonstrate your skills to employers and can be more valuable than formal experience for entry-level roles.

Related Guides and Next Steps

Pursuing a remote QA engineering career connects to several other important topics covered in our guides:

Essential Related Guides:

- Remote Engineering Jobs Hub - Overview of all remote engineering paths and how QA fits in

- Remote DevOps Engineer Jobs - CI/CD and infrastructure skills that complement QA

- Remote Interview Guide - Master the virtual interview process

Job Search Resources:

- Where to Find Remote Jobs - Top job boards and search strategies

- We Work Remotely - Largest remote-only job board with QA listings

- FlexJobs - Vetted remote positions including QA and testing roles

- Remote.co - Remote job listings with company reviews

- Remote Resume Guide - Optimize your resume for remote roles

- LinkedIn for Remote Jobs - Build your professional presence

Salary and Negotiation:

- Negotiating Remote Salary - Maximize your compensation

- Remote Benefits to Look For - Beyond salary considerations

Your QA Career Checklist

Remote QA Engineer Career Launch

1. Complete ISTQB Foundation certification (or begin studying): Validates your testing fundamentals to employers

2. Build proficiency in one automation framework: Playwright or Cypress recommended for beginners

3. Create a testing portfolio on GitHub: Include automation projects, test plans, and documented bug findings

4. Learn API testing fundamentals with Postman: API testing skills are essential for all QA roles

5. Practice SQL for database testing: Ability to query databases separates strong candidates

6. Master async communication skills: Write clear bug reports, documentation, and status updates

7. Set up a professional home office: Reliable internet, quiet space, good video setup

8. Build your target company list: Research 20+ remote-friendly companies hiring QA

9. Prepare for technical interviews: Practice test design questions and coding challenges

10. Network in QA communities: Ministry of Testing, QA subreddits, LinkedIn groups

Start Your Remote QA Journey Today

Remote QA Engineering offers a unique combination of accessibility, growth potential, and work-life balance. Whether you’re entering the field without a technical background, transitioning from development, or advancing your existing QA career, the remote-first future of work is here.

The path is clear: build your testing fundamentals, develop automation skills progressively, demonstrate your abilities through portfolio projects, and target companies that value quality engineering. Remote QA teams are building the quality infrastructure that powers the world’s software—and they’re doing it from anywhere.

Your first step? Choose one area from the checklist above and start today. Whether it’s beginning ISTQB study, writing your first Playwright test, or updating your LinkedIn profile with QA-focused keywords, momentum builds with action.

The remote QA engineering career you want is achievable—and it starts now.

Frequently Asked Questions

How do I find remote QA engineer jobs?

To find remote QA engineer jobs, start with specialized job boards like We Work Remotely, Remote OK, and TestGorilla's job board that list QA-specific remote positions. Search for titles including "QA Engineer," "SDET," "Test Automation Engineer," and "Quality Engineer" with remote filters. Companies like GitLab, Automattic, Toptal, and Deel actively hire remote QA engineers. Also check company career pages for test automation roles—many aren't listed on job boards.

What skills do I need for remote QA engineer positions?

Remote QA engineer positions require test automation skills (Selenium, Cypress, Playwright), API testing (Postman, REST Assured), and CI/CD pipeline knowledge. Programming proficiency in Python, JavaScript, or Java is essential for SDET roles. Remote-specific skills include strong written communication for async bug reporting, self-motivated test planning, and proficiency with tools like Jira, TestRail, and Slack. Experience with performance testing (JMeter, k6) and security testing basics are increasingly valued.

What salary can I expect as a remote QA engineer?

Remote QA engineer salaries in the US range from $70,000-$95,000 for entry-level, $95,000-$130,000 for mid-level, and $130,000-$170,000 for senior roles. SDET and test automation specialists command 10-20% premiums over manual QA roles. Some companies apply location-based pay adjustments, while others pay US market rates regardless of location. Check our salary comparison pages for region-specific ranges.

Are remote QA engineer jobs entry-level friendly?

Yes, QA engineering is one of the more entry-level-friendly remote tech roles. Start by learning test automation frameworks (Cypress or Playwright are beginner-friendly), contributing to open source test suites, and earning certifications like ISTQB Foundation. Many companies hire junior QA engineers remotely and provide mentorship. Freelance QA testing on platforms like Testlio or uTest can build experience and portfolio quickly.
