Remote Data Engineer Jobs: Complete 2026 Career Guide

Everything you need to land a remote data engineer job. Data pipelines, warehouses, ETL - salary data, interview questions, and companies hiring.

Updated January 20, 2026 • Verified current for 2026

Remote data engineers design, build, and maintain the infrastructure that powers modern data-driven organizations. They earn between $85,000 and $240,000 (US remote), with senior and staff-level engineers at top companies commanding $300,000+ in total compensation. Data engineering is one of the fastest-growing remote roles in 2026, with demand increasing 35% year-over-year as companies invest heavily in data infrastructure, real-time analytics, and AI/ML pipelines.

The role is exceptionally well-suited for remote work because data engineering tasks are inherently asynchronous, measurable, and output-driven. Unlike roles requiring constant real-time collaboration, data engineers spend most of their time writing code, building pipelines, and optimizing systems independently. The work products (pipelines, data models, infrastructure) are tangible and reviewable, making it easy to demonstrate productivity without physical presence. Remote-first companies actively seek data engineers because the talent pool is limited and distributed hiring dramatically expands access to skilled professionals.

If you have strong SQL skills, Python or Scala programming experience, and an interest in building scalable data systems, remote data engineering offers one of the most lucrative and flexible career paths in technology today.

What Do Remote Data Engineers Actually Do?

Data engineers are the architects and builders of an organization’s data infrastructure. While data scientists analyze data and machine learning engineers build models, data engineers create the systems that make all of that work possible. They ensure data flows reliably from source systems to analytics platforms, arrives on time, and maintains quality throughout the journey.

Day-to-Day Responsibilities

A typical day for a remote data engineer involves a mix of building new systems and maintaining existing ones. The work is highly technical but requires strong collaboration skills to understand what data stakeholders need.

Building and maintaining data pipelines consumes the largest portion of most data engineers’ time. This includes designing ETL (Extract, Transform, Load) or ELT (Extract, Load, Transform) processes that move data from source systems to destinations, writing Python or Scala code to transform raw data into usable formats, scheduling and orchestrating jobs using tools like Airflow, Prefect, or Dagster, and monitoring pipeline health with alerting for failures or data quality issues.

Data warehouse and lake management involves designing and optimizing the storage systems where analytics data lives. Data engineers choose appropriate storage formats (Parquet, Delta Lake, Iceberg), design schemas and partitioning strategies for query performance, manage costs by optimizing compute and storage utilization, and implement data retention and archival policies.

Collaborating with stakeholders means working closely with data scientists, analysts, and product teams to understand their data needs. Data engineers translate business requirements into technical specifications, review and optimize queries from analytics users, educate teams on data models and best practices, and participate in data governance and documentation efforts.

Infrastructure and tooling responsibilities include setting up and maintaining data infrastructure in cloud environments, implementing CI/CD for data pipelines, managing access controls and security, and evaluating and adopting new technologies as the data stack evolves.

Data Engineer vs Data Scientist vs Analytics Engineer

Understanding how data engineering relates to adjacent roles helps you position yourself correctly in job searches and career planning.

Data Engineers focus on the infrastructure and systems that move and store data. They write production code, manage databases, and ensure data is available and reliable. The role emphasizes software engineering skills applied to data problems. Data engineers typically don’t perform statistical analysis or build machine learning models, though they may deploy models built by others.

Data Scientists analyze data to generate insights and build predictive models. They work with data that engineers have prepared, applying statistical methods and machine learning algorithms. Data scientists need strong math and statistics backgrounds and typically use Python or R for analysis. While there’s overlap in tools (both use Python and SQL), the focus differs significantly: engineers build systems while scientists analyze data.

Analytics Engineers occupy the middle ground, focusing specifically on transforming data within the warehouse for business consumption. They use tools like dbt to build data models, create metrics and KPIs, and ensure data consistency across the organization. Analytics engineers work more closely with business stakeholders than traditional data engineers and often have stronger SQL skills than Python skills.

Machine Learning Engineers bridge data engineering and data science, focusing on deploying and scaling machine learning models in production. They need both software engineering skills (like data engineers) and ML knowledge (like data scientists). ML engineers often work closely with data engineers to build feature pipelines and model serving infrastructure.

Why Data Engineering Is Ideal for Remote Work

Several characteristics make data engineering particularly well-suited for distributed teams and remote-first companies.

Asynchronous work patterns dominate the role. Unlike frontend development where you might pair with designers frequently, or customer-facing roles requiring real-time availability, data engineering work happens largely independently. You write code, run pipelines, review results, and iterate. This naturally fits async communication styles where detailed documentation and written updates replace constant meetings.

Measurable outputs make demonstrating productivity straightforward. Pipelines either run successfully or they don’t. Data quality either meets standards or falls short. Query performance is benchmarked objectively. Remote work skeptics worry about productivity, but data engineering deliverables are inherently measurable, eliminating much of this concern.

Talent scarcity motivates companies to hire remotely. Experienced data engineers are difficult to find in any single geographic area. Companies competing for talent have discovered that remote hiring dramatically expands their candidate pool. This creates more opportunities for engineers who prefer remote work and increases leverage for salary negotiations.

Infrastructure-as-code practices align perfectly with remote collaboration. Modern data engineering relies heavily on version-controlled infrastructure definitions, automated deployments, and comprehensive documentation. These practices emerged from software engineering best practices and translate seamlessly to distributed teams.

Time zone advantages can actually benefit data engineering work. Pipelines often need monitoring and maintenance during off-hours. A globally distributed team can provide better coverage than a colocated team. Some companies deliberately hire data engineers across time zones to ensure someone is awake when critical pipelines run.

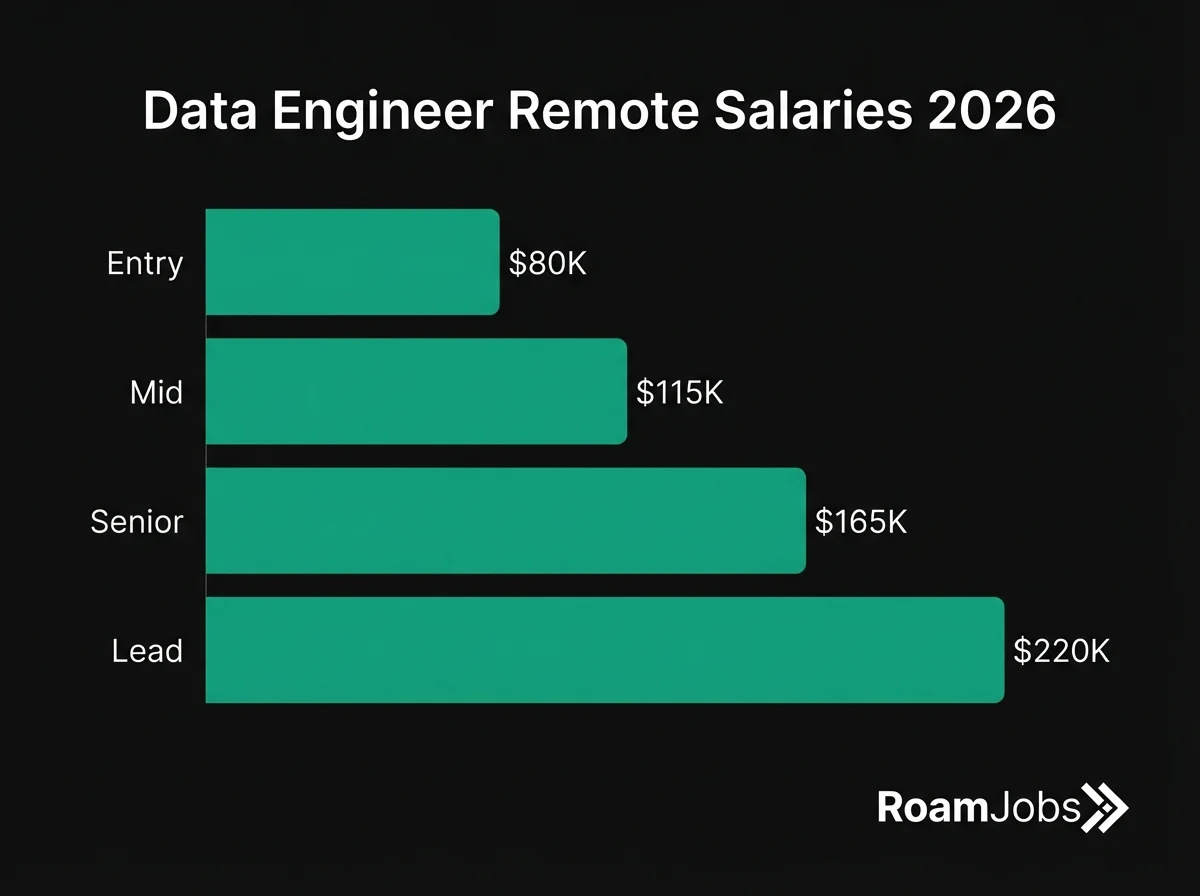

Salary Breakdown by Seniority Level

Data engineering compensation varies significantly by experience level, with substantial jumps at each career stage. The following breakdowns reflect US remote positions, which represent the most competitive segment of the market.

Data Engineer Salary by Experience & Location

| Level | 🇺🇸 US Remote | 🇪🇺 EU Remote | 🌎 LATAM | 🌏 Asia |

|---|---|---|---|---|

| Entry Level (0-2 yrs) | $85,000 - $110,000 | $50,000 - $75,000 | $35,000 - $60,000 | $25,000 - $50,000 |

| Mid-Level (2-5 yrs) | $120,000 - $170,000 | $75,000 - $115,000 | $55,000 - $90,000 | $45,000 - $80,000 |

| Senior (5-8 yrs) | $165,000 - $240,000 | $110,000 - $170,000 | $85,000 - $140,000 | $70,000 - $120,000 |

| Staff/Director (8+ yrs) | $210,000 - $320,000 | $150,000 - $240,000 | $120,000 - $190,000 | $100,000 - $170,000 |

* Salaries represent base compensation for remote positions. Actual compensation may vary based on company, experience, and specific location within region.

Entry Level / Junior Data Engineer

0-2 years experience

Breaking Into Data Engineering

Entry-level data engineering is more accessible than ever, though competition for fully remote positions remains intense. Many successful junior data engineers transition from adjacent roles like data analysis, backend development, or DevOps.

Essential skills for entry-level positions:

- Strong SQL proficiency including joins, aggregations, window functions, and CTEs

- Python programming with focus on data manipulation (pandas, basic scripting)

- Understanding of data warehousing concepts and dimensional modeling basics

- Familiarity with at least one cloud platform (AWS, GCP, or Azure)

- Version control with Git and basic CI/CD concepts

- Command line proficiency and basic Linux administration

What hiring managers look for in junior candidates:

- Demonstrated learning ability and curiosity about data systems

- Portfolio projects showing end-to-end data pipeline implementation

- Understanding of why data engineering matters to businesses

- Clear communication skills, especially in written documentation

- Eagerness to learn rather than claims of expertise beyond experience level

How to break into data engineering without prior experience:

- Build 2-3 substantial portfolio projects that showcase complete pipelines (source to destination)

- Contribute to open-source data tools like Apache Airflow, dbt, or Great Expectations

- Take on data engineering tasks in your current role, even if your title is different

- Complete hands-on courses from DataCamp, Coursera, or cloud provider training

- Document your projects thoroughly and share learnings on technical blogs

Common entry paths:

- Data analyst seeking more technical challenges

- Backend developer interested in data infrastructure

- DevOps engineer moving toward data-specific tooling

- Recent bootcamp graduate with strong fundamentals

- Self-taught engineer with impressive portfolio projects

Entry-level remote positions are competitive because they represent a desirable combination: good pay, flexible work, and career growth potential. Stand out by demonstrating real skills through projects rather than just listing technologies you’ve studied.

Mid-Level Data Engineer

2-5 years experience

Developing Expertise and Ownership

Mid-level data engineers have proven they can build and maintain production systems. At this stage, you’re expected to work independently, make sound technical decisions, and mentor junior team members.

Skills expected at the mid-level:

- Deep SQL expertise including query optimization and execution plan analysis

- Production Python development with testing, error handling, and logging

- Experience with major orchestration tools (Airflow, Prefect, or Dagster)

- Proficiency with at least one data warehouse (Snowflake, Databricks, BigQuery, or Redshift)

- Understanding of data modeling patterns (star schema, slowly changing dimensions)

- Streaming basics with Kafka, Kinesis, or similar technologies

- Infrastructure as code using Terraform, Pulumi, or cloud-native tools

- Strong debugging skills and systematic approach to troubleshooting

Typical responsibilities:

- Owning end-to-end delivery of data pipelines and infrastructure projects

- Designing data models in collaboration with analysts and scientists

- Improving pipeline reliability, performance, and cost efficiency

- Participating in on-call rotations for critical data systems

- Code reviewing junior engineers’ work and providing mentorship

- Contributing to technical documentation and best practices

Career development at this stage:

- Specialize in high-demand areas like streaming, ML infrastructure, or specific platforms

- Build cross-functional relationships with data scientists and product managers

- Take on increasingly complex projects with broader organizational impact

- Develop expertise in data governance, quality, and observability

- Consider whether you want to pursue individual contributor depth or management

What distinguishes top performers:

- Proactive identification and resolution of data quality issues

- Strong written communication that reduces back-and-forth

- Ability to balance technical excellence with business pragmatism

- Willingness to tackle unglamorous but important maintenance work

- Consistent delivery without requiring constant oversight

Mid-level is where many data engineers plateau. Breaking through requires intentional skill development beyond your daily work, taking ownership of larger initiatives, and building visibility through internal and external contributions.

Senior Data Engineer

5-8 years experience

Technical Leadership and Architecture

Senior data engineers shape the technical direction of data platforms and mentor entire teams. The role shifts from individual implementation to designing systems and enabling others to succeed.

Advanced skills expected:

- Deep expertise across the modern data stack (orchestration, warehousing, transformation, observability)

- System design skills for data platforms handling billions of events

- Performance optimization at scale: query tuning, partition strategies, cost management

- Advanced programming patterns: distributed systems, fault tolerance, exactly-once processing

- Security and compliance: access controls, encryption, audit logging, GDPR/CCPA

- Data mesh and data contracts understanding for decentralized architectures

- Evaluation and adoption of new technologies for the organization

- Cross-platform expertise spanning multiple cloud providers and tools

Strategic responsibilities:

- Designing data architecture for new initiatives and company growth

- Setting technical standards and best practices for the data team

- Driving major platform migrations or modernization efforts

- Collaborating with leadership on data strategy and roadmap

- Interviewing candidates and shaping team composition

- Representing data engineering in cross-functional planning

- Managing technical debt and platform reliability

What distinguishes senior from mid-level:

- Scope: senior engineers influence the entire data platform, not just individual pipelines

- Autonomy: given ambiguous problems and expected to define solutions

- Impact: work affects multiple teams and company-wide data capabilities

- Leadership: responsible for technical decisions and their consequences

- Communication: regularly presenting to non-technical stakeholders

Career considerations at the senior level:

- Decide between continuing on the IC track (staff, principal) or transitioning to management

- Build external reputation through conference talks, blog posts, or open-source contributions

- Develop expertise in emerging areas like real-time ML features, data mesh, or cost optimization

- Consider whether to specialize deeply or maintain breadth across the stack

- Evaluate opportunities at different company stages (startup vs. enterprise)

Senior data engineers at remote companies often have significant influence over technology choices and team culture. The combination of technical depth and communication skills required makes this a high-impact, high-compensation career stage.

Lead / Director Data Engineer

8+ years experience

Platform Strategy and Organizational Impact

Principal engineers, directors, and VPs of data engineering operate at the intersection of technology and business strategy. They’re accountable for data platform capabilities across the organization.

Strategic skills required:

- Multi-year technical roadmap development and execution

- Build vs. buy decisions for major data infrastructure investments

- Vendor evaluation and contract negotiation

- Budget management and cost optimization at scale

- Organizational design for data engineering teams

- Cross-functional leadership with product, engineering, and analytics leaders

- Industry knowledge of data platform trends and competitive landscape

- Executive communication translating technical concepts for business audiences

Typical responsibilities:

- Defining data platform vision aligned with company strategy

- Managing teams of 10-50+ engineers directly or through managers

- Setting technical standards that scale across the organization

- Driving reliability and cost targets for data infrastructure

- Representing data engineering to the executive team and board

- Recruiting and retaining top data engineering talent

- Building relationships with key vendors and partners

- Ensuring compliance with data regulations and security standards

Career paths at this level:

- VP of Data Engineering or Data Platform

- Chief Data Officer (with broader data governance scope)

- CTO at data-focused companies

- Founding data/platform leader at high-growth startups

- Principal/Distinguished Engineer (deep IC track)

- Transition to venture capital or advisory roles

What companies seek at this level:

- Track record of building and scaling data platforms

- Experience managing through growth (10x data volume, team size)

- Ability to balance technical excellence with business pragmatism

- Strong network in the data engineering community

- Previous experience at well-known data-forward companies

- Thought leadership through writing, speaking, or open-source contributions

Director-plus roles at fully remote companies are less common but growing. Companies like Snowflake, Databricks, GitLab, and various well-funded startups hire remote data leadership. These positions often require some travel for team building and executive alignment, even at remote-first organizations.

Technical Skills and Tools Deep Dive

Data engineering requires proficiency across a broad technology stack. The modern data platform combines multiple specialized tools, and knowing when to use each one separates effective engineers from those who just know the syntax.

Data Warehouses: Choosing the Right Platform

The data warehouse is the heart of most analytics infrastructures. Each major platform has distinct strengths, and companies increasingly use multiple platforms for different use cases.

Data Warehouse Comparison

Source: RoamJobs 2026 Technology Survey| Platform | Best For | Pricing Model | Remote Job Demand | Learning Curve |

|---|---|---|---|---|

| Snowflake | Large enterprises, data sharing | Compute + storage (separated) | Very High | Medium |

| Databricks | ML workloads, streaming | Compute (DBUs) | Very High | High |

| BigQuery | Google Cloud shops, serverless | Queries (bytes scanned) | High | Low |

| Redshift | AWS-native workloads | Instance-based or serverless | Medium | Medium |

| DuckDB | Local analytics, embedded | Free / open source | Growing | Low |

Data compiled from RoamJobs 2026 Technology Survey. Last verified January 2026.

Snowflake dominates enterprise data warehousing with its separation of compute and storage, near-unlimited concurrency, and data sharing capabilities. Learning Snowflake is highly valuable for remote job seekers because the platform’s popularity means abundant opportunities. Key Snowflake skills include understanding virtual warehouses and scaling, working with semi-structured data (JSON, Parquet), implementing time travel and data retention, managing Snowpipe for continuous ingestion, and optimizing for cost efficiency.

Databricks has emerged as the leading platform for organizations combining analytics with machine learning. Built on Apache Spark, Databricks excels at large-scale data processing, Python-based workflows, and the lakehouse architecture. Critical Databricks skills include Spark SQL and PySpark development, Delta Lake for reliable data lakes, Unity Catalog for governance, MLflow for model lifecycle management, and cluster management and optimization.

BigQuery offers a truly serverless experience that appeals to organizations prioritizing simplicity over fine-grained control. As part of Google Cloud, it integrates well with other GCP services. Important BigQuery skills include partitioning and clustering strategies, managing costs through query optimization, using BigQuery ML for in-database machine learning, working with nested and repeated fields, and integrating with Dataflow and other GCP services.

Redshift remains common in AWS-native organizations, particularly those who adopted cloud warehousing early. While facing competition from Snowflake and Databricks, Redshift’s tight AWS integration keeps it relevant. Key Redshift knowledge includes distribution keys and sort keys for performance, Redshift Spectrum for querying S3 data, RA3 node architecture and managed storage, and migration patterns to/from other platforms.

Pipeline Orchestration: Airflow vs Prefect vs Dagster

Orchestration tools schedule and coordinate data pipeline execution. The choice significantly impacts developer experience and operational complexity.

Orchestration Tool Comparison

Source: RoamJobs 2026 Technology Survey| Tool | Architecture | Best For | Remote Job Demand | Learning Curve |

|---|---|---|---|---|

| Apache Airflow | DAG-based, Python | Complex dependencies, mature orgs | Very High | Medium-High |

| Prefect | Task-based, Python | Modern data teams, hybrid cloud | Growing | Low-Medium |

| Dagster | Asset-based, Python | Software engineering practices | Growing | Medium |

| Mage | Notebook-style, Python | Rapid development, smaller teams | Emerging | Low |

| dbt Cloud | SQL-based, managed | Transformation-only workflows | High | Low |

Data compiled from RoamJobs 2026 Technology Survey. Last verified January 2026.

Apache Airflow remains the industry standard with the largest job market. Originally developed at Airbnb, Airflow uses directed acyclic graphs (DAGs) to define pipeline dependencies. Essential Airflow skills include writing DAGs with proper task dependencies, using operators for different systems (SQL, Python, cloud services), implementing sensors for waiting on external conditions, managing connections and variables securely, debugging failed tasks and understanding retries, and deployment options (MWAA, Cloud Composer, Astronomer, self-hosted).

Prefect takes a more Pythonic approach, treating workflows as regular Python functions with added orchestration capabilities. Prefect emphasizes developer experience with features like automatic retries, caching, and a modern UI. Prefect is gaining adoption at modern data teams and is worth learning alongside Airflow.

Dagster introduces software engineering practices to data pipelines with strong typing, testing utilities, and an asset-centric model. Rather than thinking about tasks that run, Dagster encourages thinking about data assets that get produced. This approach resonates with teams that value code quality and maintainability.

The Modern Data Stack: dbt and Beyond

The modern data stack (MDS) represents a collection of best-in-class tools that work together to enable analytics. Understanding this ecosystem is crucial for remote data engineering roles.

dbt (data build tool) has become essential for data transformation. dbt enables SQL-based transformations version-controlled in Git, data modeling with reusable components (macros, packages), testing and documentation as part of the development workflow, and lineage tracking showing how data flows through transformations. Most remote data engineering jobs either require dbt experience or list it as strongly preferred. Even if your target role doesn’t use dbt, understanding its concepts will help you discuss modern data practices in interviews.

Data quality and observability tools have emerged as critical infrastructure. Products like Great Expectations, Monte Carlo, and Bigeye help teams detect data quality issues before they impact downstream users. Understanding data quality concepts including freshness, volume, schema changes, and distribution drift is increasingly expected.

Reverse ETL tools like Census, Hightouch, and Polytomic sync data from warehouses back to operational systems. This enables use cases like sending customer segments to marketing tools or syncing analytics data to CRMs. While not always a core data engineering responsibility, familiarity helps you understand the full data lifecycle.

SQL: The Foundation of Everything

SQL appears in virtually every data engineering job description. While the basics are straightforward, interview questions test advanced concepts that separate junior from senior engineers.

Advanced SQL concepts every data engineer must master:

- Window functions (ROW_NUMBER, RANK, LAG, LEAD, SUM OVER, running totals)

- Common Table Expressions (CTEs) for readable, maintainable queries

- Recursive CTEs for hierarchical data

- Query optimization and execution plan analysis

- Indexing strategies and when they help or hurt

- Handling NULL values correctly in different contexts

- Date/time manipulation across time zones

- Semi-structured data querying (JSON, arrays)

SQL interview preparation:

- Practice 100+ problems on LeetCode, HackerRank, or DataLemur

- Focus on medium and hard difficulty problems

- Practice explaining your approach verbally while solving

- Review platform-specific SQL features for your target companies

Python for Data Engineering

Python is the lingua franca of data engineering. While some teams use Scala or Java for Spark workloads, Python proficiency is nearly universal.

Essential Python skills:

- Core language features: data structures, comprehensions, generators, decorators

- pandas for data manipulation (though often replaced by Polars or Spark at scale)

- File handling: CSV, JSON, Parquet, Avro

- API interaction: requests library, authentication, pagination

- Database connectivity: psycopg2, SQLAlchemy, cloud SDK connectors

- Error handling and logging best practices

- Testing with pytest and mocking external dependencies

- Type hints for maintainable code

Spark with Python (PySpark):

- DataFrame API for distributed data processing

- Spark SQL for querying large datasets

- Optimizations: partitioning, caching, broadcast joins

- Debugging and monitoring Spark applications

- Delta Lake integration for reliable data lakes

Big Data Processing: Spark and Alternatives

Apache Spark remains dominant for large-scale data processing, but alternatives are emerging for specific use cases.

Spark expertise includes:

- Understanding the distributed computing model (driver, executors, partitions)

- Optimizing for performance (shuffle reduction, partition sizing)

- Debugging out-of-memory errors and skewed data

- Integrating with data lakes (Delta, Iceberg, Hudi)

- Streaming with Structured Streaming

Emerging alternatives:

- Polars: Lightning-fast DataFrame library for medium-sized data

- DuckDB: Embedded analytical database, great for local development

- Apache Flink: Superior for complex streaming use cases

- Trino: Federated queries across multiple data sources

Companies Actively Hiring Remote Data Engineers

The remote data engineering job market spans companies at every stage, from startups building their first data platforms to enterprises modernizing legacy systems.

Data Platform Companies

These companies build the tools data engineers use, making them excellent places to deepen expertise.

Snowflake - The data cloud company hires extensively for engineering roles. Remote positions available across the US and internationally. Compensation is highly competitive with strong equity packages. Data engineers at Snowflake build internal tooling, work on the product itself, and support enterprise customers.

Databricks - The lakehouse pioneer maintains a distributed workforce. Strong focus on Spark, Delta Lake, and ML infrastructure. Engineering culture emphasizes innovation and open-source contribution. Compensation matches top-tier tech companies.

dbt Labs - The company behind dbt (data build tool) is fully remote and hires data engineers for internal infrastructure and product development. If you love dbt, working here means shaping the tool’s future.

Airbyte - Open-source data integration platform. Fully remote team building the future of data movement. Startup compensation with equity upside potential.

Fivetran - Leader in managed data pipelines. Remote-friendly with emphasis on reliability engineering. Works with thousands of companies’ most critical data systems.

Monte Carlo - Data observability platform. Remote-first company tackling data quality at scale. Growing team with startup energy and enterprise customers.

Remote-First Tech Companies

These companies have embraced distributed work and actively build data platforms to power their products.

GitLab - The gold standard for remote work operates a sophisticated data platform. Data engineers support analytics, product, and ML teams. Exceptional documentation culture and transparent compensation.

Zapier - Workflow automation platform with a fully distributed team. Data engineering supports both internal analytics and product features. Known for excellent work-life balance.

Automattic - The company behind WordPress.com, WooCommerce, and Tumblr. Distributed since founding with data engineers across global time zones. Unique asynchronous culture.

Stripe - Financial infrastructure for the internet. Remote-first with data engineers building platforms for fraud detection, analytics, and ML. Top-tier compensation and challenging problems.

Coinbase - Cryptocurrency exchange with remote-first engineering. Data engineering supports trading analytics, compliance, and product features. High compensation with crypto-native culture.

Elastic - Company behind Elasticsearch. Distributed-first organization with data engineering opportunities in platform and analytics.

Companies Building Modern Data Stacks

These organizations are known for innovative data practices and often blog about their architectures.

Netflix - While not fully remote, Netflix has flexible policies and hires exceptional data engineers. Industry-leading data platform work with significant open-source contributions.

Airbnb - The company that created Apache Airflow continues to innovate in data infrastructure. Hybrid work model with strong data engineering culture.

Spotify - Music streaming giant with sophisticated data and ML platforms. Remote-friendly with extensive data engineering teams supporting recommendations, analytics, and creator tools.

Uber - Massive scale data challenges across rides, delivery, and freight. Data engineering roles span real-time systems, analytics, and ML infrastructure.

Shopify - E-commerce platform with “digital by default” policy. Data engineers support merchant analytics, internal tools, and ML features.

Pinterest - Visual discovery platform with interesting data challenges. Remote-friendly with data engineering supporting recommendations and advertising.

Startups and Scale-ups

Smaller companies offer opportunities to build data platforms from scratch and have outsized impact.

Hex - Collaborative data workspace. Small team building innovative analytics tooling. Fully remote.

Census - Reverse ETL pioneer. Remote-first with data engineers building integration infrastructure.

Dagster Labs - Company behind Dagster orchestrator. Building the future of data orchestration with distributed team.

Preset - Managed Apache Superset. Open-source BI with cloud offering. Remote-first data engineering team.

Hightouch - Reverse ETL and customer data platform. Remote team building data activation tools.

Modal - Serverless infrastructure for data and ML. Small, elite team with interesting technical challenges.

Interview Deep Dive: 20+ Questions with Answers

Data engineering interviews typically include SQL challenges, system design discussions, and questions about pipeline development. The following questions represent what you’ll encounter at top remote companies.

SQL Interview Questions

This question tests window functions and handling ties. The key insight is using DENSE_RANK rather than ROW_NUMBER to include ties.

WITH ranked_products AS (

SELECT

category_id,

product_id,

product_name,

SUM(quantity * price) as revenue,

DENSE_RANK() OVER (

PARTITION BY category_id

ORDER BY SUM(quantity * price) DESC

) as revenue_rank

FROM orders o

JOIN products p ON o.product_id = p.id

GROUP BY category_id, product_id, product_name

)

SELECT

category_id,

product_id,

product_name,

revenue

FROM ranked_products

WHERE revenue_rank <= 3

ORDER BY category_id, revenue_rank;Key points to mention:

- DENSE_RANK vs ROW_NUMBER vs RANK and when to use each

- GROUP BY happens before window functions in query execution

- Handling the case where a category has fewer than 3 products

This tests date manipulation, LAG window function, and percentage calculations.

WITH monthly_revenue AS (

SELECT

DATE_TRUNC('month', order_date) as month,

SUM(amount) as revenue

FROM orders

WHERE order_date >= DATE_TRUNC('month', CURRENT_DATE) - INTERVAL '12 months'

GROUP BY DATE_TRUNC('month', order_date)

),

with_previous AS (

SELECT

month,

revenue,

LAG(revenue) OVER (ORDER BY month) as prev_month_revenue

FROM monthly_revenue

)

SELECT

month,

revenue,

prev_month_revenue,

ROUND(

((revenue - prev_month_revenue) / NULLIF(prev_month_revenue, 0)) * 100,

2

) as growth_rate_pct

FROM with_previous

ORDER BY month;Key points:

- Use NULLIF to prevent division by zero

- DATE_TRUNC for consistent month grouping

- LAG with proper ordering for previous period comparison

This classic interview question tests understanding of gaps-and-islands problems.

WITH purchase_dates AS (

SELECT DISTINCT

user_id,

DATE(order_date) as purchase_date

FROM orders

),

with_groups AS (

SELECT

user_id,

purchase_date,

purchase_date - (ROW_NUMBER() OVER (

PARTITION BY user_id

ORDER BY purchase_date

))::int as date_group

FROM purchase_dates

),

consecutive_counts AS (

SELECT

user_id,

date_group,

COUNT(*) as consecutive_days,

MIN(purchase_date) as start_date,

MAX(purchase_date) as end_date

FROM with_groups

GROUP BY user_id, date_group

)

SELECT DISTINCT user_id

FROM consecutive_counts

WHERE consecutive_days >= 3;Explain the approach:

- First deduplicate to one row per user per day

- Subtract row number from date to create groups (consecutive dates get same group)

- Count within groups to find streak lengths

- This pattern applies to many consecutive-event problems

Tests self-joins, timestamp arithmetic, and thinking about data quality.

SELECT

t1.transaction_id as original_id,

t2.transaction_id as potential_duplicate_id,

t1.user_id,

t1.amount,

t1.transaction_time as original_time,

t2.transaction_time as duplicate_time,

EXTRACT(EPOCH FROM (t2.transaction_time - t1.transaction_time)) / 60 as minutes_apart

FROM transactions t1

JOIN transactions t2 ON

t1.user_id = t2.user_id

AND t1.amount = t2.amount

AND t1.transaction_id < t2.transaction_id

AND t2.transaction_time BETWEEN t1.transaction_time

AND t1.transaction_time + INTERVAL '5 minutes'

ORDER BY t1.user_id, t1.transaction_time;Discussion points:

- Using t1.id < t2.id prevents counting pairs twice

- Consider what fields constitute a “duplicate” for the business

- In production, you might use window functions for better performance

Pipeline Design Questions

Good answer structure:

-

Clarifying questions to ask:

- What’s the latency requirement? (real-time vs batch)

- What’s the event schema? Does it change frequently?

- Who are the downstream consumers?

- What’s the budget for infrastructure?

-

High-level architecture:

- Ingestion: Mobile app sends events to API Gateway, which writes to Kafka/Kinesis for durability

- Processing: Spark or Flink job reads from streaming queue, validates schema, transforms, and writes to staging

- Loading: ELT pattern where raw data lands in warehouse, dbt transforms into analytics models

- Quality: Great Expectations or similar for data quality checks

- Orchestration: Airflow or Dagster schedules batch jobs

-

Key considerations:

- Schema evolution: Use Avro/Protobuf with schema registry

- Late-arriving data: Design for reprocessing windows

- Idempotency: Use event IDs to handle duplicates

- Monitoring: Pipeline lag, row counts, schema drift alerts

-

Scale considerations for 10M events:

- At 10M daily = ~115 events/second average, 500+ peak

- Kafka handles this easily; single Spark job sufficient

- Warehouse partitioned by date for efficient queries

Strong answer covers:

Types of SCDs:

- Type 1: Overwrite old values. Simple but loses history. Use for truly corrective changes.

- Type 2: Add new row with version tracking. Most common for tracking history. Requires surrogate keys and effective dates.

- Type 3: Add columns for previous values. Limited history but simple queries.

Type 2 Implementation Example:

-- Customer dimension with Type 2 SCD

CREATE TABLE dim_customer (

customer_sk INT PRIMARY KEY, -- Surrogate key

customer_id INT, -- Natural key

name VARCHAR(100),

address VARCHAR(200),

segment VARCHAR(50),

effective_date DATE,

end_date DATE,

is_current BOOLEAN

);

-- Merge logic (simplified)

MERGE INTO dim_customer target

USING staged_customers source

ON target.customer_id = source.customer_id AND target.is_current = true

WHEN MATCHED AND (target.address != source.address OR target.segment != source.segment) THEN

UPDATE SET end_date = CURRENT_DATE - 1, is_current = false

WHEN NOT MATCHED THEN

INSERT (customer_id, name, address, segment, effective_date, end_date, is_current)

VALUES (source.customer_id, source.name, source.address, source.segment, CURRENT_DATE, '9999-12-31', true);Implementation with dbt: dbt snapshots handle Type 2 SCDs declaratively, which is the modern approach most teams use.

Systematic debugging approach:

-

Initial triage (5-10 minutes):

- Check if it’s still running or failed

- Look at resource utilization (CPU, memory, I/O)

- Check for obvious errors in logs

- Compare metrics to previous runs

-

Identify the slow stage:

- Pipeline monitoring showing which tasks took longer

- In Spark: check Spark UI for stage durations

- In SQL: identify slow queries via query history

-

Common root causes to investigate:

- Data volume spike: Source table grew unexpectedly

- Data skew: One partition has disproportionate data

- Missing statistics: Query planner making poor choices

- Resource contention: Other jobs competing for cluster

- Schema change: New column types affecting performance

- External dependency: Slow API or database

-

Immediate mitigations:

- Scale up compute if bottleneck is resources

- Add partitioning or filtering if data volume issue

- Repartition if seeing data skew

- Kill competing jobs if resource contention

-

Long-term fixes:

- Set up alerting on runtime anomalies

- Implement data volume checks before processing

- Add circuit breakers for external dependencies

- Regular performance testing on expected data growth

Architecture approach:

-

Requirements clarification:

- Latency: Fraud decisions needed in under 100ms during transaction

- Scale: Thousands of transactions per second

- Accuracy: Balance false positives (blocking legitimate) vs false negatives (missing fraud)

-

Architecture:

Real-time path:

- Transaction event hits Kafka topic

- Flink job enriches with user history (from Redis/feature store)

- ML model scores fraud probability

- Decision published to response topic

- Latency: ~50ms end-to-end

Batch path:

- Full transaction history in data warehouse

- Daily model retraining on labeled data

- Feature engineering pipeline updates feature store

- Model performance monitoring

-

Key components:

- Feature store: Precomputed features (user’s 30-day transaction count, average amount, etc.)

- ML model serving: Low-latency inference (TensorFlow Serving, SageMaker endpoint)

- Decision service: Business rules + ML score combination

- Feedback loop: Chargebacks and manual reviews feed back to training

-

Data engineering considerations:

- Exactly-once processing for transaction events

- Feature freshness vs computation cost tradeoffs

- Handling model version transitions without disruption

- Audit trail for regulatory compliance

Data Modeling Questions

Star Schema:

- Denormalized dimension tables connect directly to fact table

- Looks like a star with fact table in center

- Simpler queries with fewer joins

- May have redundant data in dimensions

- Better query performance for analytics workloads

Snowflake Schema:

- Normalized dimension tables with sub-dimensions

- Looks like snowflake with multiple levels

- More complex queries requiring more joins

- Less storage due to normalization

- Better for maintaining data consistency

When to use Star:

- Analytics and BI workloads prioritizing query speed

- When dimension tables are relatively small

- When query simplicity matters for analysts

- Modern columnar warehouses (Snowflake, BigQuery) where join cost is low

When to use Snowflake:

- When dimension table maintenance is complex

- When storage costs are a major concern

- When data updates frequently and consistency is critical

- Legacy systems where normalization patterns are established

Modern reality: Most teams use star schemas or even wider “one big table” approaches because modern warehouses optimize for this pattern and storage is cheap. The snowflake schema is becoming less common in new architectures.

Bridge table approach (recommended):

-- Fact table

CREATE TABLE fact_orders (

order_id INT,

customer_id INT,

order_date DATE,

total_amount DECIMAL(10,2)

);

-- Bridge table for many-to-many

CREATE TABLE bridge_order_promotion (

order_id INT,

promotion_id INT,

discount_amount DECIMAL(10,2),

PRIMARY KEY (order_id, promotion_id)

);

-- Dimension table

CREATE TABLE dim_promotion (

promotion_id INT PRIMARY KEY,

promotion_name VARCHAR(100),

promotion_type VARCHAR(50),

start_date DATE,

end_date DATE

);Query patterns:

-- Orders with their promotions

SELECT

o.order_id,

o.total_amount,

p.promotion_name,

b.discount_amount

FROM fact_orders o

LEFT JOIN bridge_order_promotion b ON o.order_id = b.order_id

LEFT JOIN dim_promotion p ON b.promotion_id = p.promotion_id;

-- Promotion performance

SELECT

p.promotion_name,

COUNT(DISTINCT b.order_id) as orders_using,

SUM(b.discount_amount) as total_discount_given

FROM dim_promotion p

JOIN bridge_order_promotion b ON p.promotion_id = b.promotion_id

GROUP BY p.promotion_name;Alternative: Array/JSON in modern warehouses: Some teams store promotion IDs as arrays in the fact table, using UNNEST for analysis. This is simpler but can complicate some queries.

Core entities to model:

- dim_customer: Customer attributes, acquisition channel, company size

- dim_subscription_plan: Plan tiers, pricing, features

- dim_date: Standard date dimension

- fact_subscription: Current and historical subscription states

- fact_usage: Daily/hourly product usage events

- fact_invoice: Billing events

Key SaaS metrics supported:

- MRR/ARR with proper handling of upgrades, downgrades, churn

- Customer lifecycle (trial, active, churned, reactivated)

- Usage patterns for expansion/churn prediction

- Cohort analysis for retention

Subscription fact table design:

CREATE TABLE fact_subscription (

subscription_sk INT PRIMARY KEY,

customer_id INT,

subscription_id INT,

plan_id INT,

status VARCHAR(20), -- trial, active, paused, cancelled

start_date DATE,

end_date DATE,

mrr DECIMAL(10,2),

mrr_change DECIMAL(10,2), -- For tracking expansions/contractions

change_type VARCHAR(20), -- new, expansion, contraction, churn, reactivation

effective_date DATE,

is_current BOOLEAN

);Important considerations:

- Track MRR movements, not just current state

- Handle trial conversions carefully

- Account for annual vs monthly billing

- Support multiple subscriptions per customer

- Enable point-in-time reporting (“What was MRR on date X?”)

Behavioral and Remote Work Questions

STAR format answer structure:

Situation: “We needed to choose a streaming platform for real-time event processing. The decision would be difficult to reverse, but we had only two weeks before project kick-off.”

Task: “As the senior data engineer, I owned the recommendation. Stakeholders included the ML team (low latency needs), analytics (data warehouse integration), and infrastructure (operational burden).”

Action:

- “Narrowed options to Kafka and Kinesis based on initial requirements”

- “Built proof-of-concept with each over one week”

- “Documented tradeoffs: Kinesis simpler ops but Kafka better ecosystem”

- “Met with each stakeholder to understand their priorities”

- “Made recommendation with explicit assumptions and fallback plan”

Result: “Chose Kafka with managed service (Confluent) to balance flexibility and operations. Two years later, handling 10x initial volume. The decision proved correct, and more importantly, the documented decision process helped when we evaluated alternatives for a different use case.”

Key points to emphasize:

- Systematic evaluation process despite time pressure

- Stakeholder alignment and communication

- Documented reasoning for future reference

- Pragmatic tradeoffs rather than perfect answer

Strong answer covers:

Why documentation matters even more remotely:

- Can’t tap someone’s shoulder to ask questions

- Time zone differences mean async handoffs

- Onboarding new team members without shadowing

- Debugging at 2 AM when pipeline owner is asleep

What I document for every pipeline:

- README: What it does, why it exists, who owns it

- Data contracts: Input/output schemas with expectations

- Runbook: How to restart, common failure modes, escalation path

- Architecture diagram: Visual representation of data flow

- Code comments: Why, not just what (especially for complex logic)

Documentation practices:

- Treat documentation as part of the PR, not afterthought

- Regular documentation review during team syncs

- Use tools that keep docs close to code (dbt docs, Sphinx)

- Automated schema documentation where possible

Example: “At my previous company, I introduced a template for pipeline documentation that became standard. It reduced on-call incidents by 30% because engineers could self-serve instead of paging the owner.”

Framework for prioritization:

-

Categorize technical debt:

- Critical: Causing incidents, data quality issues, security risks

- Productivity: Slowing down team velocity

- Maintenance: Will become worse if ignored

-

Quantify impact where possible:

- Hours spent on workarounds per week

- Incident frequency and resolution time

- Developer frustration (team surveys)

-

Balance approach:

- Allocate consistent percentage to tech debt (e.g., 20% of sprint)

- Critical debt gets immediate attention regardless

- Tie debt paydown to new features when possible

-

Communication:

- Make tech debt visible to leadership

- Explain business impact in business terms

- Propose concrete improvements, not vague cleanup

Example: “Our pipeline failure rate was 15%, requiring significant on-call time. I proposed a ‘reliability sprint’ focused on adding retries, better error handling, and monitoring. After investment, failure rate dropped to 2%, freeing up engineering time for features. Leadership appreciated the quantified improvement.”

Systematic investigation approach:

-

Gather specifics (don’t assume):

- Which table/column is wrong?

- What values do they expect vs see?

- When did they notice the discrepancy?

- Which source system are they comparing to?

-

Verify the discrepancy:

- Check source system directly (not just their query)

- Confirm we’re comparing apples to apples (same time range, filters)

- Sometimes the “discrepancy” is actually correct

-

Trace the data lineage:

- Query source system directly

- Check staging tables before transformation

- Review transformation logic for filtering/aggregation

- Compare row counts at each stage

-

Common root causes:

- Timing: Source updated after pipeline ran

- Filtering: Pipeline excludes records intentionally

- Transformation: Logic bug or changed business rules

- Definition mismatch: “Active users” means different things

-

Resolution and prevention:

- Fix immediate issue

- Add data quality check to catch future discrepancies

- Document data definitions clearly

- Set up reconciliation process for critical metrics

Key point: Stay collaborative, not defensive. The goal is accurate data, not being right about whether there’s a bug.

Honest, practical answer:

Productivity strategies:

- Start day with top 3 priorities written down

- Time block for deep work (pipeline development, code review)

- Batch Slack/email into specific times rather than constant checking

- Use async communication by default; meetings for complex discussions only

Work-life boundaries:

- Dedicated workspace separate from living areas

- Consistent start and end times most days

- Calendar blocking for lunch and personal time

- Team agreements on expected response times

Staying connected:

- Regular 1:1s with manager and teammates

- Participate actively in team channels (not just lurking)

- Virtual coffee chats with colleagues I don’t work with directly

- Camera on for important meetings to maintain human connection

Challenges I’ve overcome:

- Early career: overworked because “always available”

- Solution: explicit working hours in Slack status, team norms document

- Now: more productive in fewer hours with better boundaries

For data engineering specifically:

- Pipeline monitoring outside hours is planned on-call, not constant vigilance

- Automated alerting means I’m not checking dashboards anxiously

- Weekend deployments avoided through better planning

Frequently Asked Questions

Frequently Asked Questions

What's the difference between data engineering and data science, and which should I pursue?

Data engineers build the infrastructure that moves and stores data, while data scientists analyze data and build models. Data engineering emphasizes software engineering skills (Python, SQL, distributed systems) while data science emphasizes statistics and machine learning. Choose data engineering if you enjoy building systems, solving infrastructure problems, and enabling others' work. Choose data science if you prefer analysis, modeling, and working directly on business questions. Data engineering typically has more job openings, lower barriers to entry (no PhD required), and comparable compensation. Many successful professionals start in one field and transition to the other as their interests evolve.

How much SQL depth do I really need for data engineering interviews?

You need advanced SQL proficiency that goes well beyond basic queries. Expect interview questions covering window functions (ROW_NUMBER, RANK, LAG, LEAD, running totals), complex joins including self-joins, CTEs and recursive queries, query optimization and execution plan analysis, and handling edge cases with NULLs and data quality issues. Aim to solve 100+ practice problems, focusing on medium and hard difficulty. Beyond interviews, production data engineering requires understanding platform-specific features (Snowflake's semi-structured data, BigQuery's nested fields, etc.) and optimizing queries for cost and performance at scale.

Is the modern data stack (dbt, Snowflake, Fivetran, etc.) required knowledge, or can I get hired with traditional skills?

While traditional skills (SQL, Python, Airflow, Spark) remain valuable, modern data stack knowledge increasingly differentiates candidates. Many remote-friendly companies specifically seek experience with dbt for transformations, cloud-native warehouses like Snowflake or Databricks, and observability tools. However, fundamental skills matter most: a strong SQL/Python foundation transfers across tools. Focus on understanding why modern tools exist (what problems they solve) rather than just syntax. For career flexibility, aim for depth in fundamentals plus familiarity with modern approaches.

Can I transition from data analysis to data engineering, and how long does it take?

Yes, this is one of the most common paths into data engineering. Analysts already have SQL skills and understand business data needs. To transition, you'll need to develop Python programming beyond pandas, learn orchestration tools (start with Airflow), understand data modeling and warehousing concepts, and gain cloud platform experience. The transition typically takes 6-12 months of intentional skill building. Start by taking on engineering-adjacent tasks in your current role: automating reports, building simple pipelines, improving data quality processes. Build 2-3 portfolio projects demonstrating end-to-end pipeline development.

What's the difference between ETL and ELT, and which is more common now?

ETL (Extract, Transform, Load) transforms data before loading into the destination. ELT (Extract, Load, Transform) loads raw data first, then transforms within the destination warehouse. ELT has become dominant for analytics use cases because modern cloud warehouses (Snowflake, BigQuery, Databricks) provide powerful, scalable transformation capabilities. ELT advantages include preserving raw data for future needs, leveraging warehouse compute for transformations (often cheaper than dedicated transformation infrastructure), and enabling tools like dbt that transform SQL within the warehouse. However, ETL remains relevant when transformations are complex (requiring Python/Spark), when data volumes make warehouse transformation expensive, or when working with legacy systems.

How important is streaming experience for data engineering jobs?

Streaming (Kafka, Kinesis, Flink) is increasingly important but not required for most positions. Perhaps 30-40% of data engineering roles involve significant streaming work. Batch processing remains the majority of data engineering. That said, understanding streaming concepts differentiates senior candidates and opens doors to interesting problems (real-time analytics, fraud detection, IoT). Start with understanding Kafka fundamentals (topics, partitions, consumer groups), then explore stream processing with Spark Structured Streaming or Flink. You can build streaming experience through side projects even if your current job is batch-focused.

Should I learn Spark or focus on warehouse-based transformations (dbt)?

Both have value, but prioritize based on your target companies and data volumes. For most analytics use cases with data under 10TB, warehouse-based transformations with dbt are sufficient and increasingly preferred. Spark remains essential for very large scale data (10TB+), complex transformations requiring Python, ML feature engineering, and streaming use cases. Large tech companies and data-intensive startups value Spark expertise. Smaller companies and modern data teams may never touch Spark. Review job postings at your target companies to calibrate. Learning dbt first gets you productive quickly; add Spark when you encounter scale limitations or target roles that require it.

What cloud platform should I learn (AWS, GCP, or Azure)?

AWS has the largest market share and job market, making it the safest default choice. However, the best choice depends on your target companies. Large enterprises often use AWS or Azure. Modern startups and Google-ecosystem companies prefer GCP. Data-focused companies might use Snowflake or Databricks, which work across clouds. Learn one platform deeply rather than superficially knowing all three. Core concepts (storage, compute, networking, IAM) transfer across clouds. For data engineering specifically, focus on the data services: AWS (Redshift, Glue, EMR, Kinesis), GCP (BigQuery, Dataflow, Dataproc, Pub/Sub), Azure (Synapse, Data Factory, Databricks).

How do I demonstrate data engineering skills without professional experience?

Build portfolio projects that showcase end-to-end pipeline development. Strong projects include: (1) An ingestion pipeline pulling data from a public API, transforming with Python, and loading to a warehouse (Snowflake free trial or BigQuery sandbox). (2) An Airflow DAG orchestrating multiple data sources with error handling and monitoring. (3) A dbt project with tests, documentation, and modular design. (4) A streaming project processing real-time data (Twitter API, financial data). Publish code on GitHub with thorough README documentation. Write blog posts explaining your design decisions. Contribute to open-source data tools. These demonstrate practical skills that many employed engineers lack.

What's the interview process like for remote data engineering roles?

Typical process spans 5-7 rounds over 3-6 weeks: (1) Recruiter screen covering background, compensation expectations, and remote work experience. (2) Technical phone screen with SQL coding and basic Python questions. (3) Take-home assignment or additional coding round involving pipeline design or data transformation. (4) System design interview discussing data platform architecture for senior roles. (5) Behavioral interviews focusing on collaboration, problem-solving, and remote work skills. (6) Hiring manager deep dive on experience and team fit. (7) Final panel or executive conversation. Remote-specific aspects include emphasis on written communication, async collaboration examples, and home office setup questions.

Are data engineering bootcamps worth it?

Bootcamps can accelerate learning but aren't required. Quality varies significantly; research outcomes and curriculum carefully. Advantages include structured learning path, projects with feedback, networking, and job placement support. Disadvantages include cost ($10K-$20K), variable quality, and some employer skepticism. Alternatives include self-study with online courses (DataCamp, Coursera), free resources (Airflow tutorials, dbt documentation), and building portfolio projects independently. The most successful bootcamp graduates treat it as a starting point, not a complete education, and continue learning independently. If you're self-motivated and can structure your own learning, bootcamps may not be necessary.

How do data engineering salaries compare between remote and on-site positions?

Remote data engineering salaries are highly competitive and sometimes exceed on-site equivalents. Remote-first companies like GitLab, Zapier, and Stripe often pay location-agnostic salaries based on expensive markets (SF/NYC rates regardless of where you live). Large tech companies typically adjust 15-40% based on location, though senior engineers can negotiate better terms. The trend favors candidates: talent scarcity means companies increasingly compete on compensation regardless of location. Total compensation including equity at remote-first companies often matches or exceeds on-site roles. Research each company's philosophy using Levels.fyi, Glassdoor, and team member blogs.

Your Next Steps: Landing a Remote Data Engineering Job

The remote data engineering job market offers exceptional opportunities for those willing to develop the required skills. Competition exists, but talent scarcity means qualified candidates have significant leverage.

Immediate Actions

- 1 Assess your current skill level against job requirements

Review 10-15 remote data engineering job postings and note recurring requirements

- 2 Strengthen SQL skills with advanced practice

Complete 100+ problems on LeetCode, HackerRank, or DataLemur focusing on window functions and complex joins

- 3 Build Python proficiency for data engineering

Focus on pandas, file handling, API interaction, and testing practices

- 4 Learn a modern orchestration tool

Start with Airflow (largest market share) using the official tutorial and Astronomer's guides

- 5 Get hands-on with a cloud data warehouse

Use Snowflake's free trial or BigQuery's sandbox to practice warehouse operations

- 6 Complete a dbt fundamentals course

dbt's own free course covers essentials in ~10 hours

- 7 Build 2-3 substantial portfolio projects

End-to-end pipelines with documentation, testing, and clear business context

- 8 Document your projects thoroughly on GitHub

README files, architecture diagrams, and code comments demonstrate communication skills

- 9 Optimize LinkedIn for remote data engineering keywords

Include relevant technologies, remote work experience, and set location to Remote

- 10 Create a target company list of 20-30 remote-friendly employers

Research their tech stacks, cultures, and recent job postings

- 11 Practice system design and behavioral questions

Review common patterns and prepare STAR format stories about your experience

- 12 Apply to 5-10 positions per week with customized materials

Quality applications beat mass applications for remote roles with high competition

Career Development Path

Months 1-3: Foundation building. Focus on SQL mastery, Python proficiency, and understanding data warehousing concepts. Complete at least one substantial project.

Months 4-6: Expand toolkit. Learn Airflow or another orchestrator. Get comfortable with dbt. Build a second project demonstrating pipeline orchestration.

Months 7-9: Deepen expertise. Explore Spark basics, streaming concepts, or advanced data modeling. Build a more complex project incorporating multiple technologies.

Month 10+: Active job search. Apply consistently, iterate on interview performance, and continue learning from each experience.

Related Guides

Your remote data engineering journey connects to several other career paths and skill areas:

- Remote Backend Developer Jobs - Backend skills transfer directly to data engineering, and many engineers move between these roles

- Remote Machine Learning Engineer Jobs - ML engineering is a natural progression for data engineers interested in applied AI

- Remote Engineering Jobs Overview - Compare data engineering to other engineering specializations

- Remote Interview Guide - General preparation for remote technical interviews

- Negotiating Remote Salary - Maximize your compensation once you receive offers

Data engineering represents one of the best opportunities in remote work: high compensation, strong demand, and work that’s inherently suited to distributed teams. Whether you’re transitioning from analysis, backend development, or starting fresh, the path is accessible with deliberate skill building and consistent effort.

The organizations that will define the next decade of business are building data-driven products and operations. Data engineers make that possible. Your skills are valuable, and companies are actively seeking remote talent with the capabilities you’re developing.

Get the Remote Data Engineering Career Kit

Weekly curated remote data engineering jobs, SQL challenges, and career advice delivered to your inbox.

Frequently Asked Questions

How do I find remote data engineer jobs?

To find remote data engineer jobs, start with specialized job boards like We Work Remotely, Remote OK, and FlexJobs that focus on remote positions. Set up job alerts with keywords like "remote data engineer" and filter by fully remote positions. Network on LinkedIn by following remote-friendly companies and engaging with hiring managers. Many data engineer roles are posted on company career pages directly, so identify target companies known for remote work and check their openings regularly.

What skills do I need for remote data engineer positions?

Remote data engineer positions typically require the same technical skills as on-site roles, plus strong remote work competencies. Essential remote skills include excellent written communication, self-motivation, time management, and proficiency with collaboration tools like Slack, Zoom, and project management software. Demonstrating previous remote work experience or the ability to work independently is highly valued by employers hiring for remote data engineer roles.

What salary can I expect as a remote data engineer?

Remote data engineer salaries vary based on experience level, company size, location-based pay policies, and the specific tech stack or skills required. US-based remote positions typically pay market rates regardless of where you live, while some companies adjust pay based on your location's cost of living. Entry-level positions start lower, while senior roles can command premium salaries. Check our salary guides for specific ranges by experience level and geography.

Are remote data engineer jobs entry-level friendly?

Some remote data engineer jobs are entry-level friendly, though competition can be high. Focus on building a strong portfolio or demonstrable skills, contributing to open source projects if applicable, and gaining any relevant experience through internships, freelance work, or personal projects. Some companies specifically hire remote junior talent and provide mentorship programs. Smaller startups and agencies may be more open to entry-level remote hires than large corporations.

Continue Reading

Remote Machine Learning Engineer Jobs: Complete 2026 Career Guide

Everything you need to land a remote ML engineer job. AI, deep learning, MLOps - salary data, interview questions, and companies hiring.

Remote Data Analyst Jobs 2026: Analytics, BI & Data Science

Guide to remote data positions including technical skills, portfolio projects, and interviews.

Remote Backend Developer Jobs: Complete 2026 Career Guide

Everything you need to land a remote backend developer job. Salary data by seniority, interview questions, companies hiring, and career paths.

Land Your Remote Job Faster

Get the latest remote job strategies, salary data, and insider tips delivered to your inbox.